The Validity of Man-made Atmospheric CO2 Buildup (Part I in an occasional series challenging ‘ultra-skeptic’ climate claims)

By Chip Knappenberger -- March 18, 2009

In the realm of climate science, as in most topics, there exists a range of ideas as to what is going on, and what it means for the future.

At the risk of generalizing, the gamut looks something like this: Ultra-alarmists think that human greenhouse-gas-producing activities will vastly change the face of the planet and make the earth inhospitable for humans; they therefore demand large and immediate action to curtail greenhouse gas emissions.

Alarmists understand that human activities are changing the earth’s climate and think that the potential changes are sufficient to warrant some pre-emptive action to try to mitigate them.

Skeptics think that human activities are changing the earth’s climate but, by and large, they think that the changes are not likely to be terribly disruptive (and even could be, on net, positive) and that drastic action to curtail greenhouse gas emissions is unnecessary, difficult, and ineffective.

Ultra-skeptics think that human greenhouse gas-producing activities are impacting the earth’s climate in no way whatsoever.

Most of my energy tends to be directed at countering alarmist claims about impending climate catastrophe, but the scientist in me gets just as bent out of shape about some of the contentions made by the ultra-skeptics, which are simply unsupported by virtually any scientific evidence. Primary among these claims is that human activities are not responsible for the observed build-up of atmospheric carbon dioxide. This is just plain wrong.

We have good measurement of how much carbon dioxide is building up in the atmosphere each year, and we have good estimates of how much carbon dioxide is being emitted from human activities each year. It turns out that there are more than enough anthropogenic emissions to account for how much the atmosphere is accumulating. In fact, the great mystery concerns the “missing carbon,” that is, where exactly is the extra carbon dioxide going that is emitted by humans but that doesn’t end up staying in the atmosphere. (Only about half of the human CO2 emissions end up accumulating in the atmosphere; the rest end up somewhere else—in the oceans, in the terrestrial biosphere, etc.)
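The bookkeeping behind this argument can be sketched in a few lines of Python. The numbers are illustrative assumptions rather than measurements: 6.5 GtC per year for human emissions (a figure quoted later in the comments) and an airborne fraction of one half, following the "about half" statement above. The function name `net_natural_flux` is hypothetical.

```python
def net_natural_flux(human_emissions, atmospheric_increase):
    """Net flux from all non-human sources and sinks, by mass balance.

    atmospheric_increase = human_emissions + net_natural_flux,
    so a negative result means nature is acting as a net sink.
    """
    return atmospheric_increase - human_emissions


# Illustrative assumptions, not measured values.
human = 6.5              # GtC per year emitted by human activities
airborne_fraction = 0.5  # "about half" of emissions stays in the air

increase = human * airborne_fraction
net = net_natural_flux(human, increase)
print(f"atmospheric increase: {increase:.2f} GtC/yr")
print(f"net natural flux:     {net:+.2f} GtC/yr")
```

Under these assumptions the net natural flux comes out negative: taken together, the oceans and biosphere are removing carbon from the air, so they cannot also be the net source of the increase.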

In my opinion, it would be much more useful for folks interested in the carbon cycle to try to better understand the behavior of the CO2 sinks and how that behavior may change in the future (if at all) rather than trying to come up with sources of CO2 other than human activities to explain the atmospheric concentration growth. As it is, we already have too much, not too little.

What this means is: The argument that the increase in atmospheric CO2 results from a natural temperature recovery from the depths of the Little Ice Age in the mid-to-late 1800s just doesn’t work.

In fact, all lines of evidence are against it.

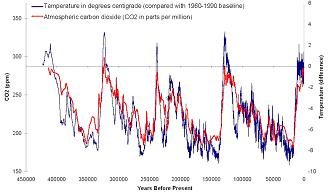

This argument has its foundation in the carbon-dioxide and temperature trends of the past 400 to 600 thousand years, which we know from air bubbles trapped in ice that has been extracted from ice cores taken in Antarctica and Greenland. Basically, the data from the ice cores show that periods when the earth’s climate has been warm are also periods when there have been relatively higher CO2 concentrations (Figure 1).

Al Gore uses this to say that the higher CO2 caused the higher temperatures; ultra-skeptics counter by pointing out that, if you look closely enough, you’ll see that the temperature rises before the CO2 rises, so rising temperatures cause rising CO2, not vice versa.

The fact is that both interpretations are correct—rising temperatures led to rising CO2, which led to more rising temperatures. But the only relevance that this has to the current situation is that this natural positive feedback between temperature variations and CO2 variations didn’t run away in the past, and so we shouldn’t expect it to run away now. It carries no relevance as to what is causing the ongoing increase in atmospheric CO2 concentrations.

Fig. 1. Variability in atmospheric CO2 (red, left-hand axis) and temperatures (blue, right-hand axis) derived from ice core air samples extending back more than 400,000 years.

But anyone who looks at the data (shown in Figure 1) will see that no matter which caused the other, the change in temperature from ice-age cold to interglacial warmth is about 10°C while the change in CO2 is about 100ppm. Since the late 1800s, the temperature has warmed a bit less than 1°C, while the CO2 concentration has increased by a bit less than 100ppm. In other words, the natural, historical response of CO2 to temperature (about 10ppm per degree) is about 10 times weaker than the change observed over the past 100 or so years (about 100ppm per degree). Thus, there is no way that the temperature rise from the Little Ice Age to the present can be the cause of an atmospheric CO2 increase of nearly 100ppm; the reasonable expectation would be about 10ppm. This line of reasoning is off by an order of magnitude.
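The order-of-magnitude comparison can be written out explicitly. This is a rough sketch that uses the rounded figures from the text (10°C and 100ppm for a glacial cycle, roughly 1°C and 100ppm since the late 1800s); the function name `co2_per_degree` is hypothetical.

```python
def co2_per_degree(delta_co2_ppm, delta_temp_c):
    """CO2 change per degree of temperature change (ppm per °C)."""
    return delta_co2_ppm / delta_temp_c


# Rounded figures taken from the text; both are approximations.
glacial = co2_per_degree(100.0, 10.0)  # ice age to interglacial
modern = co2_per_degree(100.0, 1.0)    # late 1800s to present

# CO2 rise expected if ~1°C of warming acted at the ice-core rate.
expected_ppm = glacial * 1.0

print(f"ice-core relationship: {glacial:.0f} ppm per °C")
print(f"modern relationship:   {modern:.0f} ppm per °C")
print(f"expected rise from 1°C of warming: {expected_ppm:.0f} ppm")
print(f"mismatch factor: {modern / glacial:.0f}x")
```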

And where do ultra-skeptics think the CO2 building up in the atmosphere is coming from, if not from humans? Their answer is typically “the oceans”—as the oceans warm, they outgas carbon dioxide. While this is certainly true, an opposite effect is also ongoing—a greater concentration of CO2 in the air drives more CO2 into the oceans. One way of determining how much CO2 is dissolved in the oceans is to observe the pH of the ocean waters. Long-term trends show a gradual decline in ocean pH (the source of the ocean “acidification” scare—the subject of a future MasterResource article). This means that the ocean is gaining more CO2 than it is losing. So, it can’t be the source of the large CO2 increase observed in the atmosphere.

Another way to figure out where the extra CO2 that is now part of the annual flux is coming from is through an isotopic analysis. CO2 that is released from fossil fuels carries a different (lighter) molecular weight than that which is usually part of the annual CO2 flux from land and oceans and atmosphere. CO2 released by fossil fuel has a lower 13C/12C ratio than does most other CO2 and long-term records show that the overall 13C/12C ratio in the atmosphere has been declining—an indication that an increasing proportion of atmospheric CO2 is coming from fossil fuel sources.

So there are (at least) three independent methods of determining the source of the extra CO2 that is building up in the atmosphere, and all three of them finger fossil-fuel combustion as the primary culprit.

Yet, despite the overwhelming scientific evidence, the ultra-skeptics persist in advancing the concept that the observed atmospheric CO2 growth is not caused by human actions. And sadly, since this notion is extremely pleasing to those folks (politicians et al.) who are actively fighting legislation aimed at limiting greenhouse gas emissions, it is widely incorporated into their stump speeches. Some even go so far as to suggest that the respiration of 6.5 (and growing) billion humans plays a role in the CO2 increases. Again, pure nonsense. We humans breathe out only what we take in, and since we eat plants, which extract atmospheric CO2 for their carbon source in producing carbohydrates, it is a completely closed loop. (Now if we ate coal, or drank oil, perhaps things would be different.)

Fighting bad science with bad science is just a bad idea. There are numerous reasons to oppose restrictions on greenhouse gas emissions, but the notion that they aren’t contributing to increasing atmospheric concentrations of carbon dioxide isn’t one of them.

In future articles, if I have time between combating alarmist outbreaks, I may point out some other ultra-skeptic fallacies—such as, “The build-up of atmospheric greenhouse gases isn’t responsible for elevating global average surface temperatures” or “Natural variations can fully explain the observed ‘global warming’.”

Stay tuned.

I think you have misrepresented, to some extent, the typology of sceptics. You actually presented a false dilemma: either you believe that the recent temperature rise is due to human CO2, or you are a complete nut who believes that the CO2 rise of the previous 50 or so years was not due to humans. But this is a complete straw man argument; the critical issue is whether human CO2 gives rise to higher temperatures. Many serious scientists, like Roy Spencer, believe that the recent temperature rise was caused not by human CO2 but by natural fluctuations in climate, such as PDO regime changes effected through clouds.

At present we cannot say for certain whether and to what extent human CO2 contributed to the temperature rise in the second half of the 20th century. The magnitude of the warming was similar to that in the first half of the century, which even the IPCC says could not have been caused by humans. Thus far, according to everything we know, the range of global warming has been within the bounds of natural climate variability. Apart from that, the characteristic signature of greenhouse warming, the tropical hotspot, is entirely absent from every data set. In the previous 13 or 14 years we have had no statistically significant warming, which contradicts the models’ typical prediction of a constant rate of warming on a decadal basis (see World Climate Report 🙂 ).

In such a situation, the claim that human CO2 is the culprit of the warming is the only thing we could dub a “fallacy”. Of course, we cannot completely rule out that some portion of the warming was caused by CO2, but we have no proof of that, and we have even less justification for saying that CO2 was the “primary climate driver”. This is pure model speculation without any justification. But if this is so, then we have no basis to dismiss the “radical sceptics’” claims in the way you do.

Bearing in mind all the circumstances, the AGW thesis doesn’t look very plausible. Occam’s Razor says that we already have a pretty simple and plausible explanation for the slight global warming of 1977-1998: small natural, internal climate variations. At a minimum, we don’t need the AGW dogma in order to explain that warming. The burden of proof here is on the side of the alarmists and you “moderate sceptics”.

The only evidence you gave for the thesis that CO2 was the driver of climate change is that the CO2 concentration increased due to humans during the 20th century! But that is a complete straw man! As if the only way to disprove the AGW dogma was to deny that humans are causing the CO2 rise. But almost none of them do that. Instead, most of the serious “ultra-sceptics” concede that humans increase CO2 in the atmosphere, but add that such an increase has no significant impact on temperature because of the way the atmosphere reacts to such changes (feedbacks). A small negative feedback in the form of a 2% increase in low cloud cover could offset ALL global warming from a doubling of CO2.

Chip,

Spencer’s slide shows that the PDO plus the ordinary CO2 effect could potentially account for all global warming from the 1970s onwards. But we don’t actually know the net result of CO2 forcing, because of the uncertain nature of the feedbacks. If feedbacks are strongly negative (as Spencer seems to suggest), the net result of a CO2 increase could be net cooling. So Spencer doesn’t assert that CO2 caused any portion of the recent warming. On the contrary, he is convinced, from his analysis of satellite data, that its role is minor. But nobody knows for certain. Nobody even knows what natural mechanisms are involved in climate change, or how.

But I reacted primarily to your dismissal, as a “fallacy”, of the thesis that the warming has nothing to do with CO2. On Spencer’s blog you find many assertions that global warming is not man-made, while on World Climate Report you find assertions that it is. Obviously, both assertions are at present speculations without definitive proof. I am convinced that the “balance of evidence” suggests that the role of CO2 is minor at best, but the problem is that you took the AGW thesis as proven beyond reasonable doubt, which it is not in the slightest. You have no basis at this moment to declare belief in natural causes of the recent warming a “fallacy”.

Richard Courtney,

Indeed.

Human emissions are more than enough to account for the CO2 build-up. You need look no further.

You present a bunch of numbers, most of which are only marginally related to the issue at hand. We know how much CO2 is building up in the atmosphere each year, which is the difference between what was added and what was removed. Since this difference is less than the amount that humans added, it hardly matters the magnitude of the other processes—we already have a big enough source.

Think of the total carbon dioxide in the active system (not counting that which is locked up in fossil fuels) as air in a big balloon. Think of me standing in the middle of the big balloon breathing in and out. No matter how fast I breathe, nor whether or not you join me in the balloon, the total air in the balloon doesn’t change. Think of us as the natural flux. Now, think of sticking a hose through the balloon, through which flows a small additional amount of air. Slowly, the total amount of air in the balloon increases, even though we continue to breathe in and out the same amount as before. The air coming through the hose represents CO2 released through the burning of fossil fuels (CO2 which was not in the active system initially). This is a conceptual model of what is going on. If you want to simulate the effect of sinks, poke a small hole in the balloon. As long as more air comes in through the hose than exits through the hole, air will build up in the balloon, just as CO2 does in the atmosphere, regardless of the size of the natural flux.
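For anyone who wants to play with the balloon picture, here is a minimal Python sketch of it. Everything in it is a hypothetical simplification: the units are arbitrary, the function name `simulate_balloon` and all parameter values are made up for illustration, and the hole leaks at a fixed rate, whereas real sinks respond to how much CO2 is in the air.

```python
def simulate_balloon(steps, natural_flux, hose_inflow, leak_outflow,
                     initial_air=100.0):
    """Track the air in the balloon over a number of time steps.

    natural_flux: air breathed out and back in each step (the natural cycle)
    hose_inflow:  air added through the hose each step (fossil-fuel CO2)
    leak_outflow: air lost through the hole each step (the sinks)
    """
    air = initial_air
    history = [air]
    for _ in range(steps):
        air += natural_flux   # breathing out adds air...
        air -= natural_flux   # ...and breathing in removes the same amount
        air += hose_inflow    # the hose brings in new air
        air -= leak_outflow   # the hole lets some escape
        history.append(air)
    return history


# Same hose and hole, very different breathing rates (arbitrary units).
slow_breathing = simulate_balloon(10, natural_flux=150.0,
                                  hose_inflow=2.0, leak_outflow=1.0)
fast_breathing = simulate_balloon(10, natural_flux=1500.0,
                                  hose_inflow=2.0, leak_outflow=1.0)

print(slow_breathing[-1], fast_breathing[-1])
```

Both runs end with the same amount of air: the build-up is set by the hose minus the hole, and the size of the breathing flux drops out entirely.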

-Chip

Those who say that humans have not caused increased atmospheric CO2 may be just as extreme as those who defend the predictions of highly flawed climate models. However, the latter are infinitely more dangerous.

Chip,

I certainly agree that increased CO2 should theoretically lead to higher temperatures, CETERIS PARIBUS. But that ceteris paribus is the critical element: we simply don’t know what the climate and global temperature would be like without rising CO2 concentrations. And we don’t know how, and how much, the atmosphere reacts to elevated CO2 concentrations. We have already observed, in the first half of the 20th century and in earlier periods as well, global warming trends similar in magnitude to, or even greater than, that of the last 30 or so years. Of course, that doesn’t mean natural causes are the culprit now as well. It is theoretically quite possible that the sum of all natural factors in this most recent period would have led to global cooling in the absence of the CO2 increase (the IPCC actually favors that possibility). But at the present moment we don’t have the slightest idea what the climate would be like without human CO2. If this is so, attribution of the recent warming to human CO2 is a highly speculative enterprise.

I know that there are some serious arguments that point to the possibility of CO2-driven warming, such as the greatest rate of warming occurring over the cold continental masses of Asia and North America, and a higher rate of nighttime than daytime warming. But even you and Prof. Michaels at WCR cast some serious doubt on that, by presenting new data and analyses that show contrary evidence in this regard. My conviction, for the reasons mentioned in my initial post, is that CO2 doesn’t play a significant role in climate change, but I concede that this assertion is also speculative. Maybe the most appropriate summary would be to concede that we know too little at present to attribute global warming to any particular cause.

And let me emphasize, I am neither convinced by nor too enthusiastic about other mono-causal theories, such as solar activity or cosmic rays. Although I know that many sceptics endorse those theories, I think they are also, at present, just interesting hypotheses without any rigorous proof or even a fundamental breakthrough.

Ivan, negative feedback absolutely does not mean that CO2 causes cooling. If that were true, then high insolation during interglacials would cause glaciations rather than, well, interglacials. Negative feedback simply means that as temperatures rise, nature acts to compensate for the warming effect, reducing its rate, not reversing its sign. That would be really scary. I tend to agree that it’s difficult to attribute trends, and I’m sure Chip does too. But CO2 will cause some warming; the big question (to my mind) is “how much?” I actually think that, by themselves, elevated GHG levels can explain most of the warming. But there is also the more uncertain set of known forcings to consider, which could offset that tendency, leaving a larger part of the warming to be simultaneously explained by other effects. My own view is that, as study of the various forcings continues, we’ll find that the positive forcings have been underestimated (including natural forcings) and the negative forcings overestimated, meaning that the climate is fairly insensitive and catastrophic warming is highly unlikely.

Chip,

Temperature increase over the last 100 years. Based on what? The same temperature data series that have overestimated the temperature increase since 1979 when compared to satellite temperature change? Why should anybody have any confidence in the data prior to 1979? To the tenths of a degree? The surface global data bases all suffer from: 1) Station drop-out; 2) Insufficient adjustment for urbanization; 3) Land use changes and improper siting; 4) Questionable handling of missing data; 5) Consider that 70% of the world surface is covered by oceans and only a small fraction of the ocean temperature was actually measured by thermometers, and the quality and reliability of the data measured is problematic.

More than 90,000 accurate chemical analyses of CO2 in air from 1812 to 1958 have been analyzed by Beck. This historic data reveals that, in contrast to the IPCC post-1990 data on climate change, CO2 concentration in air has fluctuated, exhibiting three high-level maxima around 1825, 1857, and 1942, the latter showing more than 400ppm. From 1857 onward the Pettenkofer process was the standard method for determining CO2 levels, with accuracy better than 3%.

Prior to 1986 most studies of gas in ice cores showed pre industrial concentrations of CO2 higher than the current atmospheric levels, up to 2450 ppm.

John

“In future articles, if I have time between combating alarmist outbreaks, I may point out some other ultra-skeptic fallacies—such as, “The build-up of atmospheric greenhouse gases isn’t responsible for elevating global average surface temperatures” or “Natural variations can fully explain the observed ‘global warming’.””

Oh, another person that claims to know for CERTAIN that “greenhouse gases” cause warming, eh? I can hardly wait. I hope you at least admit to a certain amount of uncertainty. If not, I hope your physics is better than, say, Gerlich and Tscheuschner.

jae, if you really believe that increasing CO2 will cause zero warming (zip, nada, nothing) rather than just a quantitatively small amount, perhaps you should take it up with, say, Lindzen or Christy, both very good atmospheric scientists (and skeptics) who could tell you exactly why they disagree (and they do).

Chip,

I would hope your next article also addresses the issues below.

1. What is the climate’s sensitivity (global temperatures) to CO2?

2. Is the answer to number 2 related in any way to the logarithmic concept of CO2’s effect on temperatures?

3. With the recent admission that Chinese temperature readings suffer from a 1 degree/century UHI bias, what would be a reasonable number to discount the recent global warming trend by?

4. I assume you agree that climate, hence temperatures, change naturally. What portion of recent warmings would you attribute to natural warming?

5. World Climate Report has had multiple postings on past time periods where proxies show temperatures being at a higher level than current ones. Did atmospheric CO2 cause these temperature increases in each or were these increases of “natural” cause and/or variability?

C3H Editor

Mr. Bartner,

I went back and read my article and nowhere do I use ice core measurements to back up the fact that atmospheric CO2 concentrations are rising, or that human fossil fuel consumption is the primary cause. In fact, I used ice core measurements the very same way you did—as evidence of the past relationship between temperature and CO2. You can’t very well spend one large portion of your comment saying what is wrong with ice core measurements and then turn around a few paragraphs later and say that they provide useful information on past CO2/temperature relationships. How does that work?

And as far as the oceans go, you simply repeated the fallacy that I pointed out in my article. The oceans are not a net source of CO2 during the past 50 to 100 years. I provided several independent lines of evidence (including pH and isotope trends) against your idea that they are.

Your final points pertain to greenhouse gases and global temperatures, which will be the subject of a subsequent article that I’ll write for MasterResource. So, if you still have these concerns, perhaps we can discuss them then. Based upon the nature of them, I am sure you will, because I agree with few of them.

The one I do agree with is that the investigation of the climate sensitivity to rising greenhouse gas levels is a central issue that needs additional work. I am glad that folks like Dr. Spencer are putting their expertise to the task, but the work of Dr. Spencer and others is still a long way from being completed. I, like you, tend to think that sensitivity in climate models is a bit too high.

-Chip

Mr Knappenberger:

I suppose that I am an Ultra-skeptic according to your definition because I do not know what if any proportion of the recent rise in atmospheric carbon dioxide concentration is anthropogenic. But I would like to know. Unfortunately, the data does not tell us.

For an explanation of the carbon cycle please see our paper

Rorsch A, Courtney RS & Thoenes D, ‘The Interaction of Climate Change and the Carbon Dioxide Cycle’ E&E v16no2 (2005)

And you display a misunderstanding when you say;

“In fact, the great mystery concerns the “missing carbon,” that is, where exactly is the extra carbon dioxide going that is emitted by humans but that doesn’t end up staying in the atmosphere.”

The carbon in the air is less than 2% of the carbon flowing between parts of the carbon cycle. And the recent increase to the carbon in the atmosphere is less than a third of that less than 2%.

The estimates of the flows of carbon between the parts of the carbon cycle are very gross and mostly assumptions. Indeed, the flows between deep ocean and ocean surface layers are completely unknown and it is not possible even to estimate them.

The carbon in the ground as fossil fuels is estimated to be 5,000 GtC and humans are transferring it to the carbon cycle at a rate of 6.5 GtC per year.

In other words, the annual flow of carbon into the atmosphere from the burning of fossil fuels is less than 0.02% of the carbon flowing around the carbon cycle.

It is not obvious that so small an addition to the carbon cycle is certain to disrupt the system because no other activity in nature is so constant that it only varies by less than +/- 0.02% per year.

Also, the system does not ‘know’ where an emitted CO2 molecule originated and there are several CO2 flows in and out of the atmosphere that are much larger than the human emission. The total CO2 flow into the atmosphere is at least 156.5 GtC/year with 150 Gt of this being from natural origin and 6.5 Gt from human origin. So, on the average, about 2% of all emissions accumulate.

Our considerations of available data strongly suggest that the anthropogenic emissions of CO2 will have no significant long-term effect on climate. The main reason is that the rate of increase of the anthropogenic production of CO2 is very much smaller than the observed maximum rate of increase of the natural consumption of CO2.

It is sometimes suggested that isotope studies support an anthropogenic cause of the recent rise in atmospheric CO2 concentration. In fact, the isotope data suggests an oceanic origin for the rise: Ahlbeck and Quirk each has independent studies which indicate this in process of publication.

Our paper (cited above) uses attribution studies to determine whether natural (i.e. non-anthropogenic) factors may be significant contributors to the observed rise in the atmospheric CO2 concentration. These considerations indicate that any one of three natural mechanisms in the carbon cycle alone could be used to account for the observed rise. The study provides six such models, with three of them assuming a significant anthropogenic contribution to the cause and the other three assuming no significant anthropogenic contribution to the cause. Each of the models matches the available empirical data without use of any ‘fiddle-factor’ such as the ‘5-year smoothing’ the UN Intergovernmental Panel on Climate Change (IPCC) uses to get its model to agree with the empirical data.

So, if one of the six models of our paper is adopted then there is a 5:1 probability that the choice is wrong. And other models are probably also possible.

And the six models each give a different indication of future atmospheric CO2 concentration for the same future anthropogenic emission of carbon dioxide.

This indicates that the observed rise may be entirely natural; indeed, it suggests that the observed recent rise to the atmospheric CO2 concentration most probably is natural. Hence ‘projections’ of future changes to the atmospheric CO2 concentration and resulting climate changes have high uncertainty if they are based on the assumption of an anthropogenic cause.

Richard

Question #2 above should read:

2. Is the answer to [question] number 1 related in any way to the logarithmic concept of CO2’s effect on temperatures?

Andrew, you are right concerning; maybe I expressed myself a little imprecisely (English is not my mother tongue 🙂 ). Of course, negative feedback does not mean absolute cooling, but cooling compared with the “CO2 only” sensitivity, which is something like 1 deg C. That was my contention, actually a summary of Spencer’s finding that “if feedbacks are strongly negative CO2 forcing is too small to cause a warming”. Which is to say that negative feedbacks could make the overall CO2 sensitivity much smaller than 1 deg C.

P.S. “concerning feedbacks” in the first sentence above, typo, sorry.

And, Andrew, I certainly agree that attribution is extremely difficult at this moment. And that was precisely the reason why I reacted when Chip described the natural warming hypothesis as a “fallacy”, because that implied we actually could attribute the warming to the greenhouse effect beyond doubt.

In your arguments against “extreme” deniers, you make several bad assumptions.

First error: that one can get accurate measurements of past CO2 concentrations from ice core sampling. Please read Z. Jaworowski, T. V. Segalstad and N. Ono, “Do glaciers tell a true atmospheric CO2 story?”, The Science of the Total Environment, 114 (1992) 227-284. In this article, the authors discuss the 20-some mechanical and chemical processes occurring in glaciers that alter CO2 concentrations, plus the extreme cherry picking of data that the IPCC uses to support its contention that pre-industrial CO2 levels were a flat 270-280 ppm in the 1800s.

The following are just two of those processes that play major roles in gas concentrations in glaciers.

1) Very thin ponds of melted ice form every summer a meter below the surface ice in Antarctica, when the sun does not set. It is estimated that UV solar radiation can penetrate 1 m below the surface of the snow, where the heat generated is trapped by the insulating ability of snow. This heat causes the formation of ponds, which have been reported in the literature. In addition, the thin ice sheets observed in ice cores resulted from the freezing of these ponds in winter; now deeper, they never melt again. Why is this important? CO2 is 25 times as soluble as O2 and 50 times as soluble as N2 in ice water. This will pull CO2 out of the trapped air surrounding the ponds, creating pockets of high CO2 content in the water while leaving behind depleted air samples outside the water. Up until 1985, the literature showed measured values of CO2 ranging from 150 ppm to 700 ppm, with outliers over a thousand. After 1985, only politically correct values were reported. Dr. Jaworowski claims that cherry picking is required to obtain the desired result; he claims that up to 40% of the observed data is excluded from consideration.

2) How many people know that large amounts of fluid are pumped down the sides of the drill to enable drilling? This fluid is supposed to maintain density (failed), to lubricate, and to act as an antifreeze. At the most important site, Vostok, jet fuel is the base component, with tetra- and trichloroethane and monoethyl ether of glycol added. Just when the ice core is cracking under the thermal stress of the drilling method used at Vostok, coupled with the cracking caused by the expansion of the ice core when the massive weight above is removed, the ice core comes into contact with this necessary but foreign fluid mixture plus its impurities. Gross contamination results, but we hear nothing about this from the IPCC. In addition, these fluids make a fine reservoir for escaping gas.

Have you ever asked why no ice core measurements for the last 50 years are included in the IPCC’s publications of past CO2 levels? After all, these values could be checked against direct instrumental measurements, thus telling us the accuracy of the ice core data. Is it because, as Dr. Jaworowski claims, CO2 levels were already 20 to 40% low in glacier ice? The notion that today’s value for the concentration of CO2 in our atmosphere (~380 ppm) is at the highest point in recent history, maybe ever, demonstrates how well the IPCC has been able to bury scientific information that does not agree with its theory of man-made global warming.

One needs to read Ernst-Georg Beck’s paper discussing the over 90,000 direct measurements of atmospheric CO2 levels, dating back to 1812, by the classical chemical method. Although the error of this method was rather high initially, by 1857 the methodology had improved to the point where the maximum error was 3%, later improving to 1-2% in the 20th century. In fact, during the last decade of use (ending in 1961), when the current modern instrumental method overlapped with this classical method for 5-10 years, the two methods agreed to within 5 ppm at ~330 ppm. Thus the CO2 level of 450 ppm obtained in 1942 by the classical method (using 5-year smoothing) should be considered real, and it falsifies the notion that CO2 levels are now setting records. This value, ~70 ppm higher than today, was produced by the substantial warming of the 20s and 30s combined with the effects of World War 2. In fact, the classical method shows that CO2 levels exceeded our current levels three times: reaching 450 ppm in 1822 due to the worst volcanic event (Tambora) in the last 2000 years, 390 ppm in 1852 due to the initial warming in the 1840s, and finally 450 ppm in 1942. Only once, in 1885, does the CO2 level drop below 300 ppm. Thus, CO2 levels have varied from 290 to 450 ppm in the last 200 years.

Your second error is to deny the importance of the oceans as a source of increased CO2; the oceans are the greatest reservoir of CO2 on earth, far exceeding the air. It is a well-known fact that cold water traps more CO2 than warmer water; just pour cold soda into a warm glass. The observed warming of our oceans has played a substantial role in the increase of CO2 in today’s atmosphere. Although the IPCC claims that man-made CO2 accounts for 30% of today’s CO2 level, Vincent Gray suggests that this share is below 5%, based on calculations made by two different scientific methods. Third, you seem to deny the importance of the fact that warming precedes the CO2 rise by hundreds of years and that the fall of temperature precedes the drop in CO2 levels. This again demonstrates the importance of the CO2 reservoir known as our oceans.

The theory of CO2-induced warming depends on how important black body radiation is to the removal of heat from the surface of earth. The IPCC claims that this radiation accounts for ~70% of the heat removal budget. This conclusion necessitates ignoring a note on the greenhouse effect published by the noted physicist, R. W. Wood, 100 years ago. R. W. Wood demonstrated experimentally that conduction/convection removes ~25 times as much heat from the land mass of earth as black body radiation; this experiment has since been repeated with the same result. The relative importance of conduction/convection should remain about the same outside the small greenhouse-like boxes used in his experiment. This ratio is even greater when one considers the observation that oceans are poorer radiators than land, but evaporation/conduction/convection over the 70% of our surface that is water is far stronger than conduction/convection on land. This is illustrated by how much hotter dry sand is at the shore compared to still damp sand. Therefore, to my thinking, convection is probably responsible for something like 97 – 98% of the heat removal budget from earth.

Black body radiation accounts for only about 2% of the heat removal budget from the surface of earth. CO2 is able to absorb only 8% of the latent heat (blackbody radiation) emitted by earth if no water vapor is present, 6% when water vapor is present. Thus total CO2 will only affect 0.12% of the heat removed from earth. Man-made CO2 (~5%) therefore affects only 0.006%. This explains why a hot spot in the top third of the troposphere centered over the equator has not been found. Thus, the trace gas, CO2, plays a diminishingly small role in the regulation of temperature of earth’s surface and atmosphere. Our atmosphere has two roles to play in promoting life: to remove enough heat from the surface to make life possible and to trap enough warmth in our atmosphere to promote life. Convection processes dominate surface regulation while water, in its various forms, along with the rate of convection, maintains the relative warmth of our atmosphere. It is not CO2 that traps heat. In the desert, where CO2 levels are comparable to elsewhere, but water vapor is lacking, we see rapid heating by solar radiation and rapid cooling when the sun sets.
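The chain of percentages in this comment can be retraced with a few lines of arithmetic. This is only a sketch of the multiplication, using the commenter's own stated inputs (2%, 6%, and ~5%); the variable names are illustrative, and nothing here tests whether those inputs are themselves correct.

```python
# Inputs exactly as stated in the comment (the commenter's figures, not verified values).
radiation_share = 0.02   # share of surface heat removal attributed to radiation
co2_absorption = 0.06    # fraction absorbed by CO2 when water vapor is present
man_made_share = 0.05    # fraction of atmospheric CO2 attributed to human activity

# Multiply the shares in the same order the comment does.
total_co2_effect = radiation_share * co2_absorption
man_made_effect = total_co2_effect * man_made_share

print(f"total CO2:    {total_co2_effect:.2%}")
print(f"man-made CO2: {man_made_effect:.3%}")
```

The products match the 0.12% and 0.006% quoted in the comment, so the conclusion stands or falls on the three input figures rather than on the multiplication.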

To you, my beliefs might be extreme, but they are based on solid science. The IPCC relies on pseudoscience, often reaching the level of fraud. To them, cherry picking is an art, whether dealing with tree rings or ice cores. No other field allows such broad latitude in discarding data points. If a pharmaceutical firm were caught doing this, there would be a billion-dollar fine plus possible jail time. We are in the stone age in our understanding of the complex, four dimensional, chaotic system that is climate. Yet the IPCC, today, places its argument on the unsubstantiated virtual reality of play station climatology. It is only in this world that biased guesses, such as the unproven belief that water will amplify the small effect of CO2, are put forth to scare people. Dr R Spencer has illustrated that clouds are a negative feedback, not the positive feedback suggested by modelers. We are expected to believe that a 1 degree rise in temperature in the next 100 years will cause an irreversible climb in temperature, when a 7 to 10 degree rise, in a few decades, at the end of an ice age, does not cause it. Raising temperature 1 degree should, according to them, result in a continuous, out of control loop that cannot be stopped. This is an uncontrollable chain reaction; obviously this has never happened before although stresses in the past have been far greater.

P Bartner 3/20/09

Rather than waste my time arguing point for point, let me just say that this is the most poorly reasoned argument I’ve ever read on the topic. At least some folks just say what they mean, facts be damned. This article attempts to sound academic, but it doesn’t take an academic to deduce what its central point is going to be by the second sentence. All of the ensuing blunderous misinterpretations of science and logic are just bonus after that. The complete lack of scientific insight is staggering and staggeringly obvious.

Crap. Period.

Chip,

Thanks for the response. After reading your responses to all, and without knowing anything else about you, you are definitely pro-AGW. Simply put, you come across as an “effect & cause” person — if it happens, then CO2 caused it.

I’m not sure if that is the impression you desire to achieve. If not, you might want to be more careful with your words and choose to sip out of the glass of humility every once in a while – other scientists with different viewpoints have excellent opinions also.

(FWIW, I’m sure you’re a smart person. I am also sure there are a lot of smarter individuals who would take issue with your CO2-centric tautology, though.)

I’ll keep reading but you’re not making a convincing case yet. (And please, no more balloon analogies. In fact, drop the analogies entirely – too simplistic.)

C3H Editor

One more thing:

“Unless we are at some magical concentration, such that increasing greenhouse gases no longer adds extra warming to the planet—which I have not seen anyone argue—then more greenhouse gases will lead to higher temperatures.”

This is just plain cluelessness.

The assertion that the effect of CO2 (natural or manmade) on temperature is logarithmic (a second order relationship of decreasing effect with increasing concentrations) is widely held in scientific circles and widely promoted in the AGW debate. It is a simple case of statistical probability upon which scientists from both sides of the debate agree.

Sorry nobody told you. You know, during the course of your exhaustive research. Why don’t you just leave the science to scientists, instead of playing Obama and pretending that well-chosen words can substitute for experience and understanding. You’ve clearly already insulted a few REAL scientists with this drivel. Must you continue this charade?

Chip,

Once again, a hilarious lack of understanding of causality vs similar trends. Though in this case neither is in your favor.

You said:

“despite what you would like to believe, rising CO2 concentrations produce rising temperatures.”

Check CO2 concentrations against temperature for the last decade, half century, and century and I’ll wait here for your retraction.

On none of these timescales is there observed a strong relationship between CO2 and temperature. Nor would the laws of thermodynamics even allow it at the ppm levels we’re talking about. It’s no secret that CO2 levels continued to rise through the 70’s even as we feared a new ice age was upon us. You can smooth your graphs or factor in your assumptions to explain this away, but that’s you and your assumptions, not any true causal relationship that can be called science.

The sadder thing is that this point has been made a million times previously. The causal link has not been found, a non-manipulated correlation does not exist and yet folks like you are still out there trying to sell it to anyone who doesn’t know better. So maybe you give us all a big smarty pants doublespeak explanation for why THAT is.

Otherwise just admit that you are just a hack treading the same ground as everyone instead of your current practice of giving “hats off” when someone calls out your true, yet purposely misrepresented beliefs. I stated in the POST YOU DELETED that your true colors were showing by the second sentence (remembering this?) and you were an alarmist in a rational person’s clothing. 25 posts (and a bunch of weak, pseudoscientific points) later, C3H Editor got you to admit it.

Shall we continue down this road?

Mr Knappenberger:

I cited one of our publications concerning the carbon cycle which proves the cause of recent rise in atmospheric carbon dioxide concentration cannot be known. You have replied saying:

“Human emissions are more than enough to account for the CO2 build-up. You need look no further.”

But that is nonsense! You could equally say that ‘Increased human urination is sufficient to explain sea level rise. You need look no further.’

Your balloon model is wrong because it assumes an invariant system and much of the carbon cycle (e.g. the biosphere) is not invariant.

And there is good reason to doubt the suggestion (e.g. by IPCC) that the steady base trend in the recent rise in atmospheric carbon dioxide concentration is a result of accumulation of the anthropogenic emission in the air. A rise related to the anthropogenic emission should vary with the anthropogenic emission, but the steady rise does not. The anthropogenic emissions vary too much for them to be a likely cause of the steady rise of 1.5 ppm/year in atmospheric CO2 concentration that is independent of a temperature effect.

Please note that the annual anthropogenic emissions data need not vary with the atmospheric rise. Some of the emissions may be accounted in adjacent years so 2-year smoothing of the emissions data is warranted. And different nations may account their years from different start months so 3-year smoothing of the data is justifiable. However, the 5-year smoothing applied by the IPCC to get agreement between the anthropogenic emissions and the rise is not justifiable (they use it because 2-year, 3-year and 4-year smoothings fail to provide the agreement).
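The smoothing windows discussed above can be sketched as a plain trailing moving average. The function name and the short series below are hypothetical, chosen only to show how the window length changes the result; they are not emissions data, and the IPCC's actual smoothing procedure is not reproduced here.

```python
def moving_average(values, window):
    """Return the trailing moving average of `values` over `window` points."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


# Hypothetical annual values, for illustration only (not real emissions figures).
series = [1.0, 3.0, 2.0, 4.0, 3.0, 5.0]

for window in (2, 3, 5):
    print(window, moving_average(series, window))
```

A longer window averages over more years, so it flattens year-to-year variation and returns fewer points; that is the trade-off at issue when choosing between 2-, 3-, and 5-year smoothing.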

So, let me give you a model that is based on empirical data instead of the supposition you present.

The model is that water which entered deep ocean during the Medieval Warm Period (MWP) is now returning to the ocean surface and is inducing release of oceanic CO2 in response to altered ocean pH. This release could be expected to provide the steady increase in atmospheric CO2 concentration (of 1.5 ppm/year) that is observed to be independent of temperature variations.

I am very sceptical of the ice core data because I think they indicate falsely low and very smoothed values for past atmospheric CO2 concentrations. However, I do think the ice cores indicate long-term changes to past atmospheric CO2 concentrations. And the ice cores indicate that changes to atmospheric CO2 concentration follow changes to temperature by ~800 years. If this is correct, then the atmospheric CO2 concentration should now be rising as a result of the Medieval Warm Period (MWP).

This begs the question as to the cause of the ~800 year lag of atmospheric CO2 concentration after changes to temperature indicated by ice cores. And I suggest it is an effect of the thermohaline circulation.

The water now returning to the surface layer entered the deep ocean ~800 years ago during the MWP. Therefore, a release of oceanic CO2 in response to altered pH would concur with the ice core indications (assuming my acceptance of long-term trends in ice core data is correct).

Several studies have shown that the recent rise in atmospheric CO2 concentration varies around a base trend of 1.5 ppm/year. A decade ago Calder showed that the variations around the trend correlate to variations in mean global temperature (MGT): he called this his ‘CO2 thermometer’. Now, Ahlbeck has submitted a paper for publication that finds the same using recent data. Reasons for this ‘CO2 thermometer’ are not known but they probably result from changes to sea surface temperature.

So, there is strong evidence that MGT governs variations in the recent rise in atmospheric CO2 concentration but there is no clear evidence of the cause of the steady – and unwavering – base trend of 1.5 ppm/year.

Determination of cause and effect relationships is a severe problem when attempting to evaluate every aspect of the anthropogenic global warming (AGW) hypothesis.

It is often claimed that ‘ocean acidification’ (i.e. change to the pH of the ocean surface layer that is reducing the alkalinity of the surface layer) is happening as a result of increased atmospheric CO2 concentration. However, I have repeatedly pointed out that the opposite is also possible because the deep ocean waters now returning to ocean surface could be altering the pH of the ocean surface layer with resulting release of CO2 from the ocean surface layer. Indeed, no actual release is needed because massive CO2 exchange occurs between the air and ocean surface each year and the changed pH would inhibit re-sequestration of the CO2 naturally released from ocean surface.

Ocean pH varies from about 7.90 to 8.20 at different geographical locations but along coasts there are much larger variations from 7.3 inside deep estuaries to 8.6 in productive coastal plankton blooms and 9.5 in tide pools. The pH is lowest in the most productive oceanic regions where upwellings of water from deep ocean occur.

It is thought that the average pH of the oceans decreased from 8.25 to 8.14 since the start of the industrial revolution (Jacobson M Z, 2005). And it should be noted that a decrease of pH from 8.2 to 8.1 corresponds with an increase of the CO2 in the air from 285.360 ppmv to 360.000 ppmv at solution equilibrium between air and ocean (calculations not published).
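Because pH is defined as the negative base-10 logarithm of hydrogen-ion concentration, the size of a pH change can be compared directly with the CO2 ratio quoted above. The sketch below does only that comparison; it does not reproduce the unpublished solution-equilibrium calculation, and the function name is illustrative.

```python
def hydrogen_ion_ratio(ph_before, ph_after):
    """Ratio of hydrogen-ion concentration after/before, from pH = -log10[H+]."""
    return 10 ** (ph_before - ph_after)


# pH values and ppmv figures as quoted in the comment.
h_ratio = hydrogen_ion_ratio(8.2, 8.1)
co2_ratio = 360.000 / 285.360

print(f"[H+] ratio: {h_ratio:.3f}")
print(f"CO2 ratio:  {co2_ratio:.3f}")
```

Both ratios come out at roughly 1.26, meaning a 0.1 drop in pH corresponds to about a 26% increase in hydrogen-ion concentration.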

In other words, the ocean ‘acidification’ (estimated by Jacobson) is consistent with the change to atmospheric CO2 concentration for the estimated change to the solution equilibrium between air and ocean.

Also, the spatial distribution of 13C isotope changes in the atmosphere indicates that the source of those changes has an oceanic cause. As Quirk says, “The annual variations in latitude can be seen by looking at the profile of each year against its mean value as shown below. This indicates that the major source of the 13C isotope depletion comes from the far Northern Hemisphere”; i.e. to the north of human habitation and industrial activity.

The fact of lowest ocean pH at positions of oceanic upwelling is a strong indication that the reduced upwelling is lowering the pH of the oceans. And the fact that observed oceanic pH change corresponds to the change to solution equilibrium between air and ocean indicates that re-sequestration of the CO2 naturally released from ocean surface has caused the observed change to atmospheric carbon dioxide concentration.

Hence, there is a coherent argument supported by empirical data that water now returning to the surface having entered deep ocean during the MWP may be inducing release of oceanic CO2 in response to altered pH, and this release could be expected to provide the steady increase in atmospheric CO2 concentration (of 1.5 ppm/year) that is observed to be independent of temperature variations.

Is that the true explanation? Possibly, but not certainly. There are other possibilities that fit the evidence, too.

Richard

Derek, let’s improve the tone please–Chip is being very nice in posting your comments in full and answering them patiently.

If the ultra-skeptics are as ugly as the super-alarmists, then it is a sad day–and indicative that emotions have trumped science.

BTW, I refer you to my booklet for the Institute of Economic Affairs, Climate Alarmism Reconsidered (2003) for your possible interest. It can be read online here: http://www.iea.org.uk/record.jsp?type=book&ID=218

Thank you

Very simple observations are possible regardless of the state of atmospheric CO2 levels and their causes: warming has occurred when CO2 levels increased, and cooling occurred after CO2 was elevated. According to the Vostok samples, didn’t cooling always precede CO2 levels decreasing? In fact, just in the century past there was warming prior to 1940, followed by cooling until 1970 as CO2 steadily increased, warming again to 1998, and now a decade with increasing levels of atmospheric CO2 and some cooling. The analyses of CO2 levels and sources certainly advance knowledge of CO2, but not of its effects on climate change.

The Holocene Climate Optimum exhibited higher global temperatures, and the Medieval Warm Period, as evidenced by human habitation and crops, was warmer than the present. A Harvard/Smithsonian study by Soon et al of over 240 climate studies proved that the Medieval Warm Period was global and of roughly 500 years duration. What makes the current warming, which began at the end of the Little Ice Age a century before CO2 began its steady rise, different from the thousands of natural climate changes that preceded it?

The current highly detailed analyses of atmospheric CO2 levels seem to exist devoid of the reality of past and present climate change. Outstanding methods are being used to arrive at mediocre results.

“Just because climate models may not get it right doesn’t mean that it is not happening.”

No, but since the climate models form the ONLY basis for such a conclusion, it means that if it’s happening, there is at this point NO evidence for it.

“Unless we are at some magical concentration, such that increasing greenhouse gases no longer adds extra warming to the planet—which I have not seen anyone argue—then more greenhouse gases will lead to higher temperatures.”

In fact, there is such a “magic” number. The contribution of CO2 to the greenhouse effect, in theory, is based on its ability to absorb and reradiate energy outside of the band of other gases, most importantly water vapor. But there is a saturation phenomenon; the sun only emits so much energy in each band, and once ALL of that energy is being absorbed and reradiated, further increases have no effect. That’s one reason why the effect of additional CO2 is logarithmic. We are very nearly at saturation now, which explains why fauna and flora flourished under concentrations of CO2 ten times the current level.

I think Derek D has already admitted that he doesn’t have the scientific background to fully understand the body of climate research which has already been published…

And name calling is what characterises the behaviour of the Gore global warming extremists; since they have little grasp of the science, they ultimately resort to name calling in an attempt to shut down the reasoned and accurate argument which doesn’t support their theories or agenda…

Ultimately, there is NO supporting evidence for the extreme changes predicted by the Alarmists… and ultimately any extreme measures taken to allegedly reverse climate change (the new nomenclature recently adopted, obviously to further deceive citizens about their intentions) would have little or no effect on changing the course of Earth’s climate over the near to mid-term.

If they want to cool the earth quickly, try setting off 1,000 10-megaton nuclear events simultaneously; I believe the amount of debris thrown into the atmosphere will cool the Earth for a few decades anyway. Or, wait for the next MegaVolcano or Mega Asteroid.

Political momentum, and an estimated $100 billion in annual graft for climate change campaigners and “green” projects, obviate the truth or falsehood of anthropogenic global warming (AGW). Science has become irrelevant.

Personally, I am in favor of global warming for several reasons. Energy production (oil, gas, coal, nuclear) cannot keep pace with world population growth or increased G-20 consumption. Putting aside the political impediments, it remains that future energy production will decline. Warm is good. Crops will thrive. It will take less energy to keep people from freezing to death in winter.

Likewise I believe it is beneficial to scrap our seaboard cities and rebuild Western civilization. There is no eternal right to stagnate.

Well, this article was written long before Climategate, and several other commenters have already pointed out errors.

I fail to see how CO2 impacts climate. CO2 lags behind temperature changes; it is not the cause of it. Nevertheless, CO2 isn’t pollution. There have been eras in Earth’s history where CO2 exceeded 7,000 ppm, and this was before humans. Climate is so complex that we do not know all of the natural variables. Any impact we have is drowned out by those variables.

More evidence has come out and it seems to be on the Ultra-Skeptic side.

I know this article is old, but it seems to be in the alarmists’ favour :/

I’m going through the MasterResource archives from Day One. Chip, this is an excellent article and I admire your responses in the comments thread too. If anyone asks me for an example of a dog whistle story I will send them here because you definitely got the attention of the People of Walmart!

Alan Turing famously said that some processes were not computable because they could not be compressed into an algorithm. The only realistic model of the Universe was the Universe itself, he said. I’m beginning to wonder if the climate system is also too complex to be modeled.

Nevertheless we can calculate planetary temperatures from known, well-established science. This kind of elementary model is well supported by classical thermodynamics.

Salby has now shown that the ice core data *interpretation* is wrong.

Ivan,

Let’s not get ahead of ourselves. My article is primarily pointing out the lack of scientific support for the notion that the observed build-up of atmospheric concentrations of carbon dioxide is not primarily from human activities. And yes, there are plenty of people who hold this notion.

As far as whether the increased concentrations of carbon dioxide have caused some (most) of the warming since the late 1970s, that is a different topic. But, as I alluded to, I believe they have. A thorough discussion of that topic will have to wait until I write up that article. Just because climate models may not get it right doesn’t mean that it is not happening.

Also, for an overview of Roy Spencer’s current work on the causes of global warming, his presentation at the conference last week is on-line (please take note of his slide 16, especially since the late 1970s).

-Chip

Ivan,

Thanks for the thoughtful discussion.

I guess what I have is that the greenhouse effect is responsible for about 33ºC of warming of the earth. Unless we are at some magical concentration, such that increasing greenhouse gases no longer adds extra warming to the planet—which I have not seen anyone argue—then more greenhouse gases will lead to higher temperatures. How high, is the million dollar question. Roy is doing good work trying to answer that question, as are many others, but I have never seen any valid scientifically backed argument that shows that the greenhouse effect maxed out at ~280ppm. Thus, I expect growing greenhouse gas concentrations lead to higher temperatures—a phenomenon that has been observed. Natural variations obviously act on top of this, but they don’t, nor should we expect them to, explain the majority of the planet’s warming over the past 30 years or so (whatever that amount is). At least that is my very strong opinion. Of course, what happens on the planetary scale does not readily translate to the local and/or regional scales.

-Chip

John,

I believe that every indication of large-scale temperature yet developed (thermometer records, receding glaciers, species shifts, oceanic heat content (past half-century), proxy collections) shows that temperatures have increased over the past century. I didn’t realize that some folks were contending otherwise. Perhaps I should add another topic to my list of fallacies to be covered!

And strangely, the wide CO2 swings identified in the past by Beck (using less than ideal measurements) are not observed with more refined measurements made during the past 50 years, which instead show a pretty controlled year-to-year variability. Apart from the difficulty of explaining how such large short-term swings detailed by Beck could occur, and the fact that there is little indication of their existence in other records, the case would be stronger if there were any indications that such variability actually occurred in well-sited and well-controlled experiments such as the Mauna Loa (and numerous other) atmospheric CO2 records. But there aren’t.

-Chip

Jae,

I hope you were being facetious. But, for anyone interested in an explanation of why I am certain that “greenhouse gases” cause warming, I encourage you to head down to your local college bookstore and peruse any textbook on meteorology or climate. This is not any issue of contention.

-Chip

C3H Editor,

The determination of the climate’s sensitivity is certainly, in my opinion, one of the most important and pressing fields of investigation, and many people are working on it, and have been for some time; it turns out that it does not give itself up very easily. I tend to think that it is closer to the low end of the range of estimates than the high end. And yes, the logarithmic nature of the response to greenhouse gases is certainly an important part of the determination.

I don’t profess to know exactly how much the average surface temperature of the earth has warmed during any particular period (obviously point temperature measurements are subject to a variety of influences unrelated to “global” forcings), or what the precise percentage of the observed warming in recent decades is “natural.” When you start messing with fundamental characteristics of the climate system (such as increasing the loading of greenhouse gases in the atmosphere), the distinction between “natural” and “man-made” becomes a bit fuzzy. But, I do think that the major part of the temperature increase in at least the past 30 years is from human enhancement of the earth’s greenhouse effect. There is little else to explain it. Presumably, if we had been around and instrumenting the earth during previous warmings and coolings, we would have been able to determine their causes, or at least develop a good idea as to what they may have been.

-Chip

Derek,

Thanks for your comment.

In my article I point out that indeed, there are some folks going around “saying what they mean, facts be damned.”

I laid out the facts. Apparently, you have chosen to look elsewhere in coming to your own conclusions.

Yes, the forcing response to increased greenhouse gas concentrations is logarithmic, as I have written about in the scientific literature (see Michaels, Knappenberger, Davis, and Frauenfeld, 2002. Revised 21st century temperature projections, Climate Research, vol. 23, pp. 1-9). I pointed this out in an earlier comment. It is just that we are not at the flat portion of the response yet. So, despite what you would like to believe, rising CO2 concentrations produce rising temperatures.
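To illustrate what a logarithmic response looks like, here is a minimal sketch using the commonly cited simplified expression for CO2 radiative forcing, ΔF = α ln(C/C0). The coefficient α = 5.35 W/m² and the 280 ppm baseline are assumptions brought in for illustration; they are not taken from the paper cited above, and the function name is hypothetical.

```python
import math


def co2_forcing(c_ppm, c0_ppm=280.0, alpha=5.35):
    """Simplified CO2 radiative forcing in W/m^2: alpha * ln(C / C0).

    alpha and c0_ppm are illustrative assumptions, not values from the article.
    """
    return alpha * math.log(c_ppm / c0_ppm)


# Forcing relative to the assumed baseline at a few concentrations.
for c in (280, 380, 560, 1120):
    print(f"{c:>5} ppm: {co2_forcing(c):.2f} W/m^2")

# Each doubling adds the same increment, whatever the starting concentration.
first_doubling = co2_forcing(560) - co2_forcing(280)
second_doubling = co2_forcing(1120) - co2_forcing(560)
print(f"{first_doubling:.2f} {second_doubling:.2f}")
```

Under this expression every doubling adds about 3.7 W/m²: each extra ppm contributes less than the one before it, but the curve keeps rising rather than going flat.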

-Chip

C3H Editor,

Thanks for your advice and hats-off to your sleuthing abilities. Perhaps I should have stated up front that I believe that the best science shows that human activities, primary among them the burning of fossil fuels, are leading to enhanced atmospheric greenhouse gas concentrations and rising global average surface temperatures. And I am sure that there are many people much “smarter” than me working on the issue; I can only hope that few of those who have spent time studying it hold the notion that human carbon dioxide emissions are not responsible for a vast majority of the rising atmospheric CO2 concentration (otherwise, that sort of argues against the first part of this statement). :^)

-Chip

Derek,

No intention of deleting your comment. It should be posted. As you can see from this thread, I have been open to all comments, including personal ones. However, I must admit to growing weary of them…so I wouldn’t press your luck.

As far as my comment to C3H Editor about good sleuthing, I was being facetious. I have always pointed to a human influence on climate change. See for example Michaels, Balling, Knappenberger and Davis, 2000. Observed warming in cold anticyclones. Climate Research, 14, 1-6. So, my views have never been hidden.

I really don’t know where else to go with you about the influence of CO2 on the climate. Just pick up any/every meteorology/climatology textbook and read about it. Hopefully, our readers who are interested can do their own research, starting from the points that you and I and other commenters have made, and investigate the topic for themselves. I would encourage them to do so, as I am sure you would as well.

-Chip