Global Lukewarming: A Great Intellectual Year in 2011

By Chip Knappenberger -- January 19, 2012

“Mounting evidence [of lukewarming] begins to start to make you wonder whether there is some fundamental problem between climate models and reality.”

“To me, the most significant thing that the Climategate emails show is that the deck is stacked against the publication of research results that are critical of the established scientific consensus, and the skids are greased for papers that run in support…. Not a good situation for the advancement of science.”

“Lukewarmers” are those scientists (and others) who believe the balance of evidence is middling between “climate alarmists” (who tend to think that the global temperature rise will lie in, or even exceed, the upper half of the IPCC’s 1.1°C–6.4°C range of projected temperature rise this century) and ultraskeptics, or “flatliners” (who tend to think that the addition of human-generated carbon dioxide has virtually no impact on global temperatures).

Lukewarmers have found the world to be a lonely place. But favor (think physical processes of global climate) smiled on us in 2011. Several scientific studies produced results that, when considered in combination, provide evidence that the general warming of the earth’s climate is proceeding at a rate that lies in the lower half of the IPCC’s projected temperature change during the 21st century.

And with a low-end temperature rise come low-end impacts. Seemingly good news for all!

2011 Temperatures

First, let’s review the global average temperature, both at the surface and in the lower atmosphere, since 1979, the year that satellite observations of the temperature of the lower atmosphere became reliably available, and pretty near the beginning of the second warming episode of the 20th century.

Fig. 1 shows the temperature data from one surface dataset (from the U.S. National Oceanic and Atmospheric Administration) and one satellite dataset of observations from the lower atmosphere (from the University of Alabama in Huntsville). There are other data compilations besides these two, but they are somewhat similar and the differences are not what I am interested in discussing here (although it is by no means an uninteresting topic).

Figure 1. Annual average global temperature anomalies from the surface (red) and from the lower atmosphere (blue), 1979-2011 (the value for 2011 in the surface record is based on only 11 months of data).

It is pretty obvious that the global temperature in 2011 has done little to hasten the observed temperature increase, but rather has acted to further ensconce the established trend (if not add a tiny bit of downward pressure on it). As the trend in global temperature rise continues to be rather low, the amount of scientific scrutiny it is subject to grows, for it pushes at the envelope of our understanding of climate change and variability under rapidly increasing anthropogenic greenhouse gas emissions.

Observed Trend Less Than Climate Model Simulations

A prominent paper examining the issue was published in 2011 by Dr. Benjamin Santer and a long list of colleagues including some of the bigger names in climate science. These researchers set out to see just how unusual the rather low warming rate in the lower atmosphere is when compared with climate model expectations of the evolution of the temperatures when run with a combination of the observed (through the year ~2000) and projected (through 2010: from the IPCC’s SRES A1B scenario) anthropogenic enhancements to the atmosphere’s chemical and physical composition.

What the researchers found was that the observed temperature trends calculated from periods ranging from 10 to 32 years all lie below the average trend of the same length projected by a large family of climate models (Fig. 2). Each climate model includes some representation of some of the processes which lead to “natural” (random) variability (processes such as El Niño/La Niña, volcanic eruptions, solar variability—note that not all models include all of these processes throughout the entire 1979-2010 period of study). As the time period over which the temperature trend is calculated increases, the impact of natural variability on the magnitude (and even the sign) of the trend decreases as the short-term temperature deviations caused by random variability tend to cancel out.

Therefore, the envelope of model expected trends shrinks as the period of time over which the trend is determined expands (yellow area in Fig. 2). What this means is that the observed trends in this figure begin to become much more unusual compared with model expectations as the trend lengthens.
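The narrowing of the envelope with trend length can be illustrated with a toy simulation. This is only a minimal sketch and not the procedure used by Santer et al.: it assumes a fixed underlying trend and uncorrelated (white) year-to-year noise, whereas real climate variability is autocorrelated. The values of `true_trend` and `noise_sd` are made up for illustration.

```python
import random
import statistics


def ols_slope(values):
    """Least-squares slope of values against their index (units per step)."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def trend_spread(length_years, runs=2000, true_trend=0.02, noise_sd=0.1, seed=0):
    """Standard deviation of fitted trends across many synthetic runs.

    Each run is a straight line (true_trend, in deg C per year) plus
    white noise (noise_sd, in deg C). Both values are assumptions.
    """
    rng = random.Random(seed)
    slopes = []
    for _ in range(runs):
        series = [true_trend * t + rng.gauss(0, noise_sd)
                  for t in range(length_years)]
        slopes.append(ols_slope(series))
    return statistics.stdev(slopes)


spread_10 = trend_spread(10)
spread_32 = trend_spread(32)
print(f"spread of 10-year trends: {spread_10:.4f} C/yr")
print(f"spread of 32-year trends: {spread_32:.4f} C/yr")
```

With the same noise in every run, the 32-year trends cluster much more tightly around the underlying trend than the 10-year trends do, which is the behavior the yellow area in Fig. 2 is described as showing.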

By the time you get to trend lengths of 30 or so years, the observed trend is threatening the lower limit of the 95% confidence range of climate model expectations. Such mounting evidence begins to start to make you wonder whether there is some fundamental problem between climate models and reality.

Figure 2. A comparison between modeled and observed trends in the average temperature of the lower atmosphere, for periods ranging from 10 to 32 years (during the period 1979 through 2010). The yellow is the 5-95 percentile range of individual model projections, the green is the model average, the red and blue are the average of the observations, as compiled by Remote Sensing Systems and University of Alabama in Huntsville respectively (adapted from Santer et al., 2011).

Over the full record (1979-2010) the real world has only warmed about two-thirds as much as models indicate that it should have. If this continues to the end of the century, the IPCC’s 21st century warming range of 1.1°C to 6.4°C becomes about 0.75°C to 4.25°C —with a central value of 2.5°C. But what’s worse is that a model/observation disparity could indicate that the climate models are not faithfully reproducing reality, which would mean that they are not particularly valuable as predictive tools.
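The arithmetic behind that adjusted range is simple enough to check directly. The sketch below just applies the two-thirds ratio quoted above to the IPCC end points; the variable names are mine, and carrying the ratio forward to 2100 is the assumption stated in the text, not a model result.

```python
# IPCC projected 21st-century warming range quoted in the text (deg C).
IPCC_LOW_C = 1.1
IPCC_HIGH_C = 6.4

# Observed warming as a fraction of modeled warming, 1979-2010 (from the text).
OBSERVED_TO_MODEL_RATIO = 2 / 3


def scale_range(low, high, ratio):
    """Scale both ends of a projection range by the same ratio."""
    return low * ratio, high * ratio


scaled_low, scaled_high = scale_range(IPCC_LOW_C, IPCC_HIGH_C,
                                      OBSERVED_TO_MODEL_RATIO)
midpoint = (scaled_low + scaled_high) / 2

print(f"scaled range: {scaled_low:.2f} C to {scaled_high:.2f} C")
print(f"midpoint: {midpoint:.2f} C")
```

The scaled end points come out near 0.73°C and 4.27°C, which round to the "about 0.75°C to 4.25°C" given above, with the midpoint at 2.5°C.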

My conclusion (which is different from that of the authors), based upon the research presented by Santer et al., is that the models are on the verge of failing. It is further strengthened by the results of another paper published in 2011 by Foster and Rahmstorf.

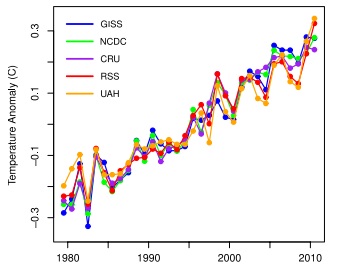

These researchers sought to identify the magnitude of the natural signals present in the observed trends of surface and lower atmospheric temperatures and to see whether the recent slowdown in the rate of global average temperature rise could be explained by the combination of the timing of natural influences (again, solar, El Niño/La Niña, volcanoes). Ultimately, they concluded that, in fact, it could be. And when the natural signals were removed from the global temperature record, global warming was alive and well and proceeding at a remarkably steady rate since the beginning of their period of study, 1979-2010 (Fig. 3).

According to the authors “[t]here is no indication of any slowdown or acceleration of global warming, beyond the variability induced by these known natural factors.”
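One common way to remove a natural signal from a temperature series is multiple linear regression: fit the series to a time trend plus an index of the natural factor, then subtract the fitted natural part. The sketch below shows only that general idea on synthetic data. It is not Foster and Rahmstorf's code or data; the single `natural_index` is a made-up stand-in for factors such as El Niño/La Niña, and the coefficients used to generate the series are assumptions.

```python
import math
import random


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit(columns, y):
    """Least-squares coefficients for y as a weighted sum of the columns."""
    k = len(columns)
    xtx = [[sum(p * q for p, q in zip(columns[i], columns[j]))
            for j in range(k)] for i in range(k)]
    xty = [sum(p * v for p, v in zip(columns[i], y)) for i in range(k)]
    return solve_linear(xtx, xty)


# Synthetic 32-year record: trend + natural signal + noise (all assumed values).
rng = random.Random(1)
years = list(range(32))
true_trend = 0.017  # deg C per year, illustrative only
natural_index = [math.sin(2 * math.pi * t / 4.3) + rng.gauss(0, 0.3)
                 for t in years]
temps = [true_trend * t + 0.1 * natural_index[t] + rng.gauss(0, 0.03)
         for t in years]

ones = [1.0] * len(years)
time_axis = [float(t) for t in years]
intercept, trend, natural_coef = fit([ones, time_axis, natural_index], temps)

# "Adjusted" series: the observations with the fitted natural part removed.
adjusted = [temp - natural_coef * x for temp, x in zip(temps, natural_index)]
print(f"fitted trend: {trend:.4f} C/yr, natural coefficient: {natural_coef:.3f}")
```

In this toy setup the regression recovers a trend close to the one built into the synthetic data, and the adjusted series is what is left once the natural wiggles are subtracted.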

Figure 3. Global temperatures (from various compilations of the surface (GISS, NCDC, CRU) and the lower atmosphere (RSS, UAH)) after the natural signals have been removed, 1979-2010 (from Foster and Rahmstorf, 2011).

What makes the Foster and Rahmstorf work particularly encouraging for lukewarmers is that the authors find that for periods of 30 years or so, the removal of natural variability makes little difference to the magnitude of the observed trend in the lower atmosphere.

However, thinking back upon the results from Santer et al., the same is probably not entirely true for all of the climate model runs for the 1979-2010 time period. Almost certainly, the combination of random variability has added some amount of noise to the trend distribution even at time frames of 30 years or so.

What this means is that if the modeled temperatures were also stripped of their natural variability, then the 95% range of uncertainty (the yellow area depicted in Fig. 2) would contract inwards towards the model mean (green line). The net effect would be to make the observed trends (red and blue lines in Fig. 2) over the past 30 years or so lie even closer to (if not completely outside of) the lower bound of the 95% confidence range from the model simulations. Such a result further weakens our confidence in the models and further strengthens our confidence that future warming may well proceed at a modest rate, somewhat similar to that characteristic of the last three decades.

Climate Sensitivity

Another popular lukewarmer paper in 2011 was published by a research team led by Andreas Schmittner from Oregon State University and concerned an estimate of the earth’s climate sensitivity (and the uncertainty about that estimate) derived from some recently published determinations of land and sea surface temperatures during the Last Ice Age based on a collection of climate proxies.

Schmittner and colleagues found that when using these newly available proxies from both land and ocean areas, not only was their central estimate of climate sensitivity (how much the earth’s temperature will change with a doubling of the atmospheric concentration of carbon dioxide) a bit lower than the IPCC central estimate, but more importantly, Schmittner et al.’s determination of the uncertainty about their estimate virtually rules out climate sensitivities above 6°C. This finding is in stark contrast to the IPCC entertaining the possibility of a “fat right-hand tail” to the distribution of potential values including climate sensitivity values as high as 10°C or greater. In their Abstract, Schmittner et al. summarized their findings:

Assessing impacts of future anthropogenic carbon emissions is currently impeded by uncertainties in our knowledge of equilibrium climate sensitivity to atmospheric carbon dioxide doubling. Previous studies suggest 3 K as best estimate, 2–4.5 K as the 66% probability range, and non-zero probabilities for much higher values, the latter implying a small but significant chance of high-impact climate changes that would be difficult to avoid. Here, combining extensive sea and land surface temperature reconstructions from the Last Glacial Maximum with climate model simulations we estimate a lower median (2.3 K) and reduced uncertainty (1.7–2.6 K 66% probability). Assuming paleoclimatic constraints apply to the future as predicted by our model, these results imply lower probability of imminent extreme climatic change than previously thought.

Another paper with a warm reception from the lukewarmers was published by Gillett et al. (in actuality, this paper was published during the first week of 2012, but was accepted for publication in late 2011, so I’ll go ahead and include it here). Gillett and colleagues used the character and evolution of the global average temperature from 1851 through 2010 to bend the output of a climate model to best fit reality (or at least reality as captured by the Hadley Centre/Climate Research Unit global average temperature compilation).

In doing so, Gillett and colleagues concluded that the temperature rise over the course of the 21st century is probably going to be considerably less than their raw climate model projections suggest. In fact, they write in their paper titled “Improved constraints on 21st-century warming derived using 160 years of temperature observations” that:

“Our analysis also leads to a relatively low and tightly-constrained estimate of Transient Climate Response of 1.3–1.8°C, and relatively low projections of 21st-century warming under the Representative Concentration Pathways.”

Basically, they found that when their climate model is constrained by reality over the past 160 years, the temperature projections for the end of the 21st century are reduced by about 33%. This is a result of a similar magnitude to that determined by Santer et al.

2011 (Dis)Honorable Mention

I would be remiss in my review of the climate stories of 2011 if I failed to mention the release of another round of Climategate emails. The so-called Climategate 2.0 emails further many of the storylines that ran throughout the original Climategate releases back in November 2009: rampant gatekeeping, data hoarding, and general misbehavior.

To me, the most significant thing that the Climategate emails show is that the deck is stacked against the publication of research results that are critical of the established scientific consensus and that the skids are greased for papers that run in support. It is little wonder why the literature is as one-sided as it is on the issue. The folks who are responsible for establishing the consensus have also taken it upon themselves to be the protectors of it. Not a good situation for the advancement of science.

All of which goes double to show that the papers which do make it through to publication and which chink away at the icon of alarming climate change quite likely are actually on to something.

Bottom Line

So what I have documented is a collection of observations and analyses that together is telling a story of relatively modest climate changes to come. Not that temperatures won’t rise at all over the course of this century, but rather than our climate becoming extremely toasty, it looks like we’ll have to settle (thankfully) for it becoming only lukewarm.

My guess is that 2012 will hold more good news for lukewarmers, both in terms of supportive scientific findings, and also in a migration of folks towards the middle of this issue. As being lukewarm becomes a bit more comfortable, I imagine that more folks will join the happy middle–and maybe my lunch calendar will start to fill up!

References:

Foster, G., and S. Rahmstorf, 2011. Global temperature evolution 1979-2010. Environmental Research Letters, 6, 044022, doi:10.1088/1748-9326/6/4/044022

Gillett, N.P., et al., 2012. Improved constraints on 21st-century warming derived using 160 years of temperature observations. Geophysical Research Letters, 39, L01704, doi:10.1029/2011GL050226.

Santer, B.D., et al., 2011. Separating Signal and Noise in Atmospheric Temperature Changes: The Importance of Timescale. Journal of Geophysical Research, doi:10.1029/2011JD016263.

Schmittner, A., et al., 2011. Climate sensitivity estimated from temperature reconstructions of the Last Glacial Maximum. Science, 334, 1385-1388, doi:10.1126/science.1203513 .

The real question is not that climate changes nor that there has been a slight warming since the little ice age. It is what is causing the changes and increase in temperature? That there has been a slight warming, by itself, does not reveal causation.

The catastrophic anthropogenic global warming alarmists insist it is due to man’s addition of CO2 to the atmosphere by his burning of fossil fuels. Their fix is to stop the use of fuels, stop the industrial revolution, and stop the lives of 95+% of the living humans on earth. I find THIS claim and their apparent getting away with it genuinely alarming. Chicken Little had more evidence that the sky was falling than the alarmists have for their claim. After all, an acorn actually fell on her head.

All they have are speculations based upon computer simulations that assume an increasing CO2 level is the principal cause of warming. This is at minimum the logical fallacy of assuming what you are trying to prove. Further, by your presented evidence, the simulations fail miserably to follow the behavior of the real world.

Yes, they can point to an actual increasing temperature and to an actual increasing level of CO2 but that is ALL they have – a correlation. They presume CO2 drives temperature without having a proven mechanism for that driving. Yet anyone who has left a cold soda to warm knows it goes flat when it warms. That means temperature drives CO2 out of solution. Since the world’s surface is mostly water and that water contains 50 times the CO2 of the atmosphere, any warming of the ocean will increase the level of CO2 in the atmosphere.

The fact remains, there is yet to be developed any observational evidence demonstrating man’s trace addition to a trace level of CO2 (~ 3% of ~0.04%) in the atmosphere has any real world effect beyond providing much needed food for the plants of the world, for which the hungry peoples of the world should be grateful.

The bottom line of all of this is the stopping of the burning of fossil fuel will have NO measurable impact upon climate but will have a devastating impact upon the global economy and the lives of very real alive people.

You can display the “Mission Accomplished” banner on the superstructure of the battleship “Lukewarm”, but you can’t make it true. There is no greenhouse effect, of increasing atmospheric temperature with increasing atmospheric carbon dioxide, the simple fact both alarmists and lukewarmists will have to face in the end.

Chip,

I recommend you not hold your breath waiting for luncheon invitations from Jim Hansen, Gavin Schmidt, Tom Wigley, Keith Briffa, Mike Mann, Grant Foster, Joe Romm, Ed Markey, Henry Waxman or George Soros. 🙂

Ed,

Perhaps not from Lionell Griffith or Harry Dale Huffman either?

(FWIW, Mike and I have had lunch/dinner on several occasions)

-Chip

Lionell,

There is conclusive proof from satellite data that increased CO2 will cause increases in temperature. [Check out any of the links that follow my comment.] The debate right now revolves around the following questions: (a) what are the negative and positive feedback mechanisms (this determines how much amplification there is in addition to the effect solely of CO2 levels); and (b) what is the economic impact of living in a world with increased global average temperature.

These are the questions we should be focusing on. There is firm evidence (not just on Earth) that increased CO2 levels will cause increased temperatures. There is no debating this. It’s like debating whether the Sun goes round the Earth or vice versa. It’s a waste of time and a waste of energy to debate this.

On the other hand, this article does a good job of addressing Question (a). After accounting for positive and negative feedback, what effect will an increase in CO2 levels have on the global average temperature? This is a really important question to answer, and hopefully, we are narrowing down the uncertainty.

Once we narrow down the uncertainty, then we can build improved economic models to estimate how much economic benefit or damage will occur from a given temperature rise, and hence from a given level of CO2 in the atmosphere.

We are still a long way off from having an answer to question (b) with any real certainty. It is worrisome that some countries have already started adopting taxes on CO2 even before they have a real answer to question (b).

But there is a difference between having an open mind regarding what is the answer to question (b), and re-hashing debunked arguments, as Lionell did above.

Chip,

Thanks for the references at the end of your article.

It’s too bad that there aren’t more people like you who are actually interested in answering question (a) and more people like Lomborg/Tol/Nordhaus, who are interested in answering question (b).

Best,

Eddie

http://www.eumetsat.int/Home/Main/Publications/Conference_and_Workshop_Proceedings/groups/cps/documents/document/pdf_conf_p50_s9_01_harries_v.pdf

http://www.nature.com/nature/journal/v410/n6826/abs/410355a0.html

Eddie,

Sorry, no. They analyzed wavelength spectra intensities rather than total energy flow. They are very different critters. They assert that a change in spectra implies a change in temperature. Yes, they can detect a change in CO2 concentration from the change in spectra. They assert that because the CO2 concentration changed, the temperature changed. So what? Again, they are assuming what they are trying to prove.

I am not buying your argument nor am I willing to invite you to lunch. You are clearly a True Believer. There is no point in any more discussion.

Note how Knappenberger elects to misrepresent not only the science but the scientists’ position on AGW– none of the papers unequivocally support assertions/opinions that warming down the road will be “lukewarm” (a vague term at best, so it is difficult to make a quantitative analysis, I guess it can mean whatever the person using it wants it to mean).

Specifically, to my knowledge Santer, Urban, Foster, Rahmstorf, Solomon, Mears, Wigley, Meehl, Stott, and Thorne (amongst the other authors of the papers cited) are all very much concerned about the impacts of doubling or trebling CO2 and it is my understanding that they do not advocate continuing with business as usual as advocated by WCR. So that makes the narrative of the Knappenberger opinion piece especially disingenuous. I am confident that some authors would take strong exception to Knappenberger misrepresenting their work and trying to use it to push a “lukewarm” agenda/narrative.

Not only that, but any evidence that has been published the past year or so that showed model predictions are consistent with observations when one takes the inherent uncertainties into account or notes that there are still outstanding issues with the satellite tropospheric temperature estimates (e.g., Thorne et al. (2011a), Thorne et al. (2011b), Mears et al. (2011)) or papers that are consistent with the climate sensitivity reported in the IPCC’s 4th assessment (e.g., Park and Royer (2011), Pagani et al. (2010), Previdi et al. (2011), Kiehl (2011)) were simply ignored in the Knappenberger piece.

The opinion piece in question is a very clear attempt to bias and mislead people by ignoring the body of evidence. I should not be surprised, as their behavior is consistent with that reported at SkepticalScience. In fact, Representative Waxman in 2011 called for Patrick Michaels (who like Knappenberger also works for World Climate Report) to be investigated for misleading Congress.

Lest anyone still thinks that Mr. Knappenberger is an honest broker, please read this:

http://www.skepticalscience.com/patrick-michaels-serial-deleter-of-inconvenient-data.html

And this,

http://deepclimate.org/2010/06/06/michaels-and-knappenbergers-world-climate-report-no-warming-whatsoever-over-the-past-decade/

You might also want to be truly skeptical and look into his background:

http://www.sourcewatch.org/index.php?title=Chip_Knappenberger

Albatross,

Thanks for stopping by.

As you mention, this is an opinion piece, in which I walk through my interpretation of data and analyses which appeared in the scientific literature in the last year or so. I freely admitted in my piece, that my interpretation of some of the presented results differs from that of the original authors of the paper. I think you’ll be among the first to admit that one is not obligated to agree with all the conclusions you encounter (in the scientific literature or elsewhere).

Do you disagree that, over the past 30 years or so, the trends in the satellite observations of lower-troposphere temperature fall below the model mean of the climate model expectations for that behavior? Am I misunderstanding the figure from Santer et al. that I showed? Do you think that if natural variability were removed from the model runs, the envelope of variability would stay the same? Or would it shrink a bit, as I surmised?

I included the complete abstract of Schmittner et al. And the result of Gillett et al. is pretty straightforward to understand.

So, it is not clear to me how I could be as far off the mark as you suggest.

And a word to the wise: I said over at Skeptical Science that our comments here are open to those commenters who are modestly well-behaved, and you are toeing that line. I am not sure what Pat Michaels or World Climate Report have to do with the topic of my article.

-Chip

Chip, you are entitled to your own opinions, but not your own facts. And you are most certainly not entitled to misrepresent and distort other scientists’ findings to fit your own narrative/agenda.

You can choose to ignore inconvenient papers and facts and caveats (as you did with both Gillett et al and Schmittner et al.), but that only underscores your bias. Now you are welcome to delude yourself (you are quite good at that), but I and others take exception when you try and impart that on others in public.

You saying this is intriguing:

“…my interpretation of some of the presented results differs from that of the original authors of the paper.”

That is because you have a skewed perspective (i.e., bias) and seek out those interpretations or points that affirm your biases.

Ignoring me calling you on ignoring those papers that do not fit with your narrative is not going to make those papers go away or the fact that you ignored them. Or are you going to try and claim ignorance and that you are not up to date with the literature? Problem is, you citing those recent papers in your post demonstrates that you do in fact keep up to date with the literature.

Now I’ll leave you in peace. I have better things to do with my time thanks. Have a lovely evening and goodbye.

PS: From Santer et al.(2011),

“There is no timescale on which observed trends are statistically unusual (at the 5% level or better) relative to the multimodel sampling distribution of forced TLT trends. We conclude from this result that there is no inconsistency between observed near-global TLT trends (in the 10- to 32-year range examined here) and model estimates of the response to anthropogenic forcing.”

Oops, you somehow “forgot” that bit and somehow inverted reality to conclude:

“Such mounting evidence begins to start to make you wonder whether there is some fundamental problem between climate models and reality.”

And as for the models running warm, you also forgot to inform your readers that the satellite estimates probably have an unresolved cool bias, again from Santer et al. (2011):

“Given the considerable technical challenges involved in adjusting satellite-based estimates of TLT changes for inhomogeneities [Mears et al., 2006, 2011b], a residual cool bias in the observations cannot be ruled out, and may also contribute to the offset between the model and observed average TLT trends.”

And you also ignored this:

“Possible explanations for these results include the neglect of negative forcings in many of the CMIP-3 simulations of forced climate change (see Supporting Material, Table S1), omission of recent temporal changes in solar and volcanic forcing [Wigley, 2010; Kaufmann et al., 2011; Vernier et al., 2011; Solomon et al., 2011], forcing discontinuities at the ‘splice points’ between CMIP-3 simulations of 20th and 21st century climate change [Arblaster et al., 2011], model response errors, residual observational errors [Mears et al., 2011b], and an unusual manifestation of natural internal variability in the observations (see Figure 7A).”

So yet more evidence of your bias and propensity to misrepresent scientists’ findings and not share important caveats with your readers.

One last thought, actually a thought from Dr. Matt Huber (Purdue University):

“Climate scientists don’t often talk about such grim long-term forecasts, Huber says, in part because skeptics, exaggerating scientific uncertainties, are always accusing them of alarmism. ‘We’ve basically been trying to edit ourselves’, Huber says. ‘Whenever we see something really bad, we tend to hold off. The middle ground is actually worse than people think.

‘If we continue down this road, there really is no uncertainty. We’re headed for the Eocene. And we know what that’s like.’”

Dr. Matt Huber, October 2011. http://ngm.nationalgeographic.com/2011/10/hothouse-earth/kunzig-text/2

Albatross, The advocacy by authors of ending BAU is not a valid argument for the restriction of their data, anyone can use it for whatever purpose they want. if the authors are being misrepresented then that is what you should show: are they misquoted, is the data altered, etc.

Albatross,

I presented the facts from the papers and then gave my interpretation of them—just as you suggested.

As far as caveats go, sure, every paper contains caveats, but if the caveats were such to overwhelm the main findings of the paper then the papers would have never been written up or published in the first place. This is not to say that there is never something important lurking in the caveats, but just that in most cases, they shouldn’t keep you from considering the main results—as I was doing.

Am I to understand that there is an uncorrected cool bias in the tropospheric temperature observations and a warm bias in the model results. If this were the case, then I would say that there remains still much work to be done in understanding and representing the present, and perhaps we ought to get this in order before trying to forecast the future.

As far as the proportion of studies that were published last year which did or did not support a lukewarmer’s view of things, I think I addressed that in my Climategate 2.0 section.

-Chip

Eric,

“The advocacy by authors of ending BAU is not a valid argument for the restriction of their data, anyone can use it for whatever purpose they want”

I have no idea what you are trying to say. People can use scientists’ data to pursue an ideological agenda if they like (as Michaels and Chip are doing), but that does not make doing so ethical or scientifically correct or morally defensible.

“if the authors are being misrepresented then that is what you should show: are they misquoted, is the data altered, etc.”

Really? I showed that the authors’ work had been misrepresented (in fact Chip is trying to invert reality); read my posts above. And you are arguing strawmen: in this instance I did not claim that the authors were misquoted or that the data were altered. What was done was ignoring inconvenient facts and findings in the papers that did not fit their agenda. It is called lying by omission, Eric; it is also called distorting, and ignoring inconvenient facts so as to push an ideological agenda.

SkS has clearly shown that Michaels and Knappenberger did doctor graphics in both Schmittner and Gillett, as well as ignoring certain key caveats:

http://www.skepticalscience.com/patrick-michaels-serial-deleter-of-inconvenient-data.html

See also here:

http://rabett.blogspot.com/2012/01/chip-clips.html

Most reasonable people would have been mortified after being called out for misrepresenting scientists’ work and doctoring their graphs. Most reasonable people would have apologized to the authors and promptly posted corrections. Neither of those things happened. Moreover, the above post demonstrates that the distortion and misrepresentation continues unabated.

I find it very unfortunate that you appear to be siding with serial misinformers, Eric. It really does call into question the validity of your claim about being a true skeptic.

Now I intend to keep my word about not speaking to Chip, I only returned to address your moot points. I have no interest in engaging with someone who twice now has tried to change the meaning of what I said. For example, I most definitely did not suggest the following:

“Am I to understand that there is an uncorrected cool bias in the tropospheric temperature observations and a warm bias in the model results.”

No. As Santer et al. noted, the differences between the models and the satellite estimates could be an artifact of the satellite estimates having a cool bias (Mears et al. (2011) noted that the satellites still have unresolved issues), and the differences could also be attributable to several other issues that I listed in my quotes from that paper.

Another example,

“As far as the proportion of studies that were published last year which did or did not support a lukewarmer’s view of things”

I never spoke to “the proportion”– Chip is arguing a strawman. Conspiracy theories and paranoia aside, what I said was:

“papers that are consistent with the climate sensitivity reported in the IPCC’s 4th assessment (e.g., Park and Royer (2011), Pagani et al. (2010), Previdi et al. (2011), Kiehl (2011)) were simply ignored in the Knappenberger piece.”

So make that three times Chip has distorted my position or what I said.

[…] 2012Environmentalism and the Leisure Class William Tucker, American Spectator, 20 January 2012Global Lukewarming Chip Knappenberger, Master Resource, 19 January 2012Dismal Outlook for EVs on Both Sides of the […]

So, I’m curious about figure 2.

I have an application to calculate the latest linear trends from various global temperature data sets.

Are the UAH and RSS for the lower troposphere (LT)? ( a label is appropriate ).

Are they getting the ‘Fu’ treatment?

The reason I ask is that, like CRU, SST, and the MT numbers, the RSS v3.3, as downloaded from RSS, indicates cooling since 2001 ( which should show up as 10 years on your chart ).

Also, the 33 year numbers I have vary from .13C/decade for UAH LT/RSS LT to .16 per decade for GISS.

The use of monthly versus annual averages has an effect, but I’m curious about the inputs.

Never mind – it helps to actually read the paper and realize the Santer input.
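For readers wondering how the monthly-versus-annual choice mentioned above can shift a trend, here is a minimal sketch. It fits an ordinary least-squares line to a purely synthetic series (an assumed 0.14 C/decade straight line plus a fixed seasonal wiggle), once on monthly values and once on annual means. None of the numbers come from UAH, RSS, GISS, or CRU, and the helper name `ols_slope` is made up for illustration.

```python
# Sketch: ordinary least-squares trend from monthly vs. annual means.
# The series below is SYNTHETIC (a straight line plus a fixed seasonal
# wiggle); it is not UAH, RSS, GISS, or CRU data.
import math

def ols_slope(xs, ys):
    """Least-squares slope of ys against xs."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den

YEARS = 33
TRUE_TREND = 0.014  # C per year; an assumed value for the synthetic series

# Time axis in years, one point per month.
months = [m / 12 for m in range(YEARS * 12)]
monthly = [TRUE_TREND * t + 0.1 * math.sin(2 * math.pi * t) for t in months]

# Annual means: average each block of 12 monthly values.
annual_t = [sum(months[i:i + 12]) / 12 for i in range(0, len(months), 12)]
annual = [sum(monthly[i:i + 12]) / 12 for i in range(0, len(monthly), 12)]

monthly_trend = 10 * ols_slope(months, monthly)   # C per decade
annual_trend = 10 * ols_slope(annual_t, annual)   # C per decade

print("monthly trend, C/decade:", monthly_trend)
print("annual trend,  C/decade:", annual_trend)
```

In this toy case the annual means average the seasonal wiggle away and recover the assumed trend, while the monthly fit comes out slightly lower because the wiggle is not perfectly uncorrelated with time. Real data sets differ in many other ways, so this only illustrates that the averaging choice is one of the inputs worth checking.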

Yes, it was a good year for Lukewarmers.

The simple fact is this. According to our best science the probability that sensitivity lies below 3.2C is higher than the probability that it lies above 3.2C. Our best models which average 3.2C appear to be overly sensitive. The continued use of high sensitivity models is not a wise path for winning the conversation.

albatross:

“And as for the models running warm, you also forgot to inform your readers of that the satellite estimates probably have an unresolved cool bias, again from Santer et al. (2011):

“Given the considerable technical challenges involved in adjusting satellite-based estimates of TLT changes for inhomogeneities [Mears et al., 2006, 2011b], a residual cool bias in the observations cannot be ruled out, and may also contribute to the offset between the model and observed average TLT trends.”

What you assert and what Santer wrote are two logically different things. Arguing that one cannot ‘rule out’ a cool bias is not logically equivalent in any universe to your statement that there ‘probably is’ a cool bias. If there probably is a cool bias, please show the math that estimates that cool bias along with the probability that it exists.

One cannot rule out that monkeys may fly out of your butt, but that does not entail that they probably will.

If Santer believes there is a cool bias, then he should write a paper estimating that bias. Rather, he uses that uncertainty to avoid asking the real question: is 3.2C too high for the estimation of sensitivity?

Dear Chip,

Although not an expert, it has been my impression for some time that – even assuming skeptics like Lindzen and Spencer wrong – it is still hard to believe that the IPCC haven’t adopted a pessimistic outlook with respect to climate sensitivity.

However, as far as this impacts on policy, I think that even a climate sensitivity as low as 2 C would still imply that our CO2 emissions are going to cause us problems – e.g. sea level rise – if we do burn all fossil fuels before the end of the century.

So I tend only to find comfort in hoping that Lindzen is right. Do you agree with this?

Alex Harvey

Chip, I think Tamino has some good points about your analysis at this link: http://tamino.wordpress.com/2012/01/23/best-case-scenario/

I particularly agree that hoping for 1.7 C more this century as a “best case” is pretty cavalier…

Utahn,

I have responded to the analytical concerns raised by Tamino over in the comments of his article (that you linked to in #20).

Thanks for the heads up.

-Chip

What makes the Foster and Rahmstorf work particularly encouraging for lukewarmers is that the authors find that for periods of 30 years or so, the removal of natural variability makes little difference on the magnitude of the observed trend in the lower atmosphere.

I simply do not understand why this is “comforting.” Their finding simply means that natural variations have averaged to zero over the 30 years of the record. That’s exactly why climate scientists insist on a 30-yr or so calculation of trends: so that natural variations *do* average out to zero.

The remaining trend is 0.2 C/decade. That’s not reassuring. It would mean a 3 C increase over pre-industrial temperatures by 2100, about 1/2 an ice age. And that trend is projected to increase, since we are still on the lower side of CO2 warming and feedbacks are just starting to kick in. I am baffled about how Chip finds a 3 C rise by 2100 reassuring.

In mathematics, imaginary numbers are denoted by a small i suffix.

When I see the “adjusted” I’m thinking small i imaginary.

Clearly, climate hysterics like the idea because they can project whatever they like.

But back on planet earth, the trends since 1979 for GISS, CRU, RSS-LT, UAH-LT, RSS-MT, UAH-MT, and Hadley SST are:

* all below the ‘high scenario’ IPCC trend

* all below the mid range of IPCC

* all below the ‘low scenario’ IPCC trend.

And rather than accelerating, there is even a cooling trend for the last eleven years in the data sets of: RSS-MT, RSS-LT, UAH-MT, CRU, and SST.

David Appell,

The 0.2 C per decade is the IPCC AR4 estimate for a range of emissions scenarios, I guess? (I haven’t read the Foster/Rahmstorf paper.)

Chip appears to be saying that Foster and Rahmstorf are wrong about the magnitude of the trend but may be right that removal of natural variability makes little difference to the underlying trend.

(If he didn’t believe that they are wrong about the underlying trend, he wouldn’t be a lukewarmer and wouldn’t have written this post.)

I remain curious to understand what lukewarmers believe we should do about lukewarming.

Chip,

By the way, there is another study that found lower climate sensitivity that you haven’t mentioned –

Kohler, P., R. Bintanja, H. Fischer, F. Joos, R. Knutti, G. Lohmann, and V. Masson-Delmotte, 2010: What caused Earth’s temperature variations during the last 800,000 years? Data-based evidence on radiative forcing and constraints on climate sensitivity. Quaternary Science Reviews, 29, 129-145.

Despite the fact that they found almost the same best guess sensitivity as Schmittner et al. 2011 (they find equilibrium climate sensitivity of between “1.4 and 5.2 K, with a most likely value near 2.4 K”), this paper received practically no press.

Nonetheless, it is discussed a great deal in the AR5 ZOD.

I find particularly interesting their conclusion,

“We can identify the changes in the radiative budget caused by individual processes. From simple principles we calculate the equilibrium temperature anomaly connected with every change in the radiative budget considering only the Planck feedback. We furthermore attach to all our assumptions uncertainty estimates and come up with both a best guess results and a potential range of variability. The temperature decline suggested by our approach for the LGM is without the feedbacks of water vapour, lapse rate and clouds -3.1 K to -4.7 K. If we assume that the strenght of these additional feedbacks is independent from climate and can be derived from 2xCO2 experiments in climate models, then a much larger temperature range of -6.4 K to -9.6 K for the LGM is suggested. This range exceeds other temperature estimates but its lower end is consistent with reconstructed LGM temperatures (e.g. Farrera et al., 1999; Ballantyne et al., 2005; Schneider von Deimling et al., 2006b; Masson-Delmotte et al., in this issue).”

If they had stopped here, I would have guessed they were going to find very low sensitivity. However, they then estimate climate sensitivity by assuming a known change in temperature and change in radiative forcing. They assume a known temperature change of 5.8 +/- 1.4 K after Schneider von Deimling et al. 2006 (where one of the Schneider von Deimling co-authors is Stefan Rahmstorf). Only then do they derive a best guess climate sensitivity of 2.4 K.

“I remain curious to understand what lukewarmers believe we should do about lukewarming.”

While I identify with the label, there is no consensus organization of ‘lukewarmers’.

My take on lukewarming, however, is that a small amount of warming is certainly tolerable and likely beneficial to both humans and ecosystems.

The Holocene Climatic Optimum ( or ‘Hypsithermal’ ) is not a pure analogue of CO2-driven lukewarming, but it is an important case study.

We know from orbital mechanics that northern hemisphere summers were much longer and received much more direct sunshine ( by about 50 W/m^2 at high latitudes, tapering to smaller differences at low latitudes ).

We also know that northern winters received less solar energy during the HCO.

We also know that the northern hemisphere dominates global temperatures because the preponderance of land is in the NH.

We further know that the iconic advance of human civilization – the ‘Cradle of Civilization’ Mesopotamian Era – corresponded to the HCO.

Our ancestors didn’t ‘do’ anything about this natural climate change.

We shouldn’t ‘do’ anything about this modern era of milder climate.

Climate Watcher,

What do you think about the extinctions issue? While the earth has certainly experienced periods warmer than the present, and these may have been beneficial to life as you say, I am not able to convince myself that climate has ever changed as fast as it is predicted to do over the course of the present century. Do you have a good reason to believe that ecosystems can adapt to a possibly unprecedented rate of change of surface temperature?

Alex,

I don’t believe there is an extinction issue.

Why? Because species necessarily evolved through numerous glacial and interglacial cycles. If temperature variation were so significant, species so vulnerable would have ( and may have ) become extinct long ago, leaving only tolerant species today.

I have seen reference to warming rate being significant, but I cannot understand why a degree per century should affect ecosystems when many other natural variations are of much greater rates.

The diurnal cycle is not climate, but all natural above ground species are exposed to it. I live in a desert with a much greater variation (20C) on a daily basis, but let’s assume a lower rate for more humid climates of 10C.

So each day there is roughly 10C of warming followed by 10C of cooling.

So on a comparable scale, each morning there is 10C per 1/2 day.

To put the rate in comparable terms for AGW, the per century rate is

10C / (1/2 day) * 365 days per year * 100 years per century

That means the rate of morning warming is 730,000 C per century.
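The conversion above can be checked with a few lines of Python. This is only a sketch of the arithmetic as stated in the comment: the 10 C swing over half a day is the comment's own assumption, and the function name is hypothetical.

```python
# Sketch of the unit conversion in the comment above.
# Assumption (from the comment): 10 C of warming over half a day.
# The function name is hypothetical, for illustration only.

DAYS_PER_YEAR = 365
YEARS_PER_CENTURY = 100

def rate_per_century(delta_c, duration_days):
    """Convert a temperature change over some number of days to C per century."""
    per_day = delta_c / duration_days
    return per_day * DAYS_PER_YEAR * YEARS_PER_CENTURY

morning = rate_per_century(10.0, 0.5)
print(morning)  # 730000.0
```

The result matches the 730,000 C per century figure quoted in the comment.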

The three decade long Hadley global temperature trend is 1 C per century.

Clearly the -rate- of change is not that disruptive.

Similar change from winter to summer, from ahead of the cold front to behind the cold front, from El Nino year to La Nina year all are of much greater rate ( and largely of greater extent ) than AGW.

The poster animal for AGW harm is the polar bear, but we know from orbital mechanics and fossils that during the Eemian and the Holocene Climatic Optimum summer sunshine was some 50 watts per m^2 more intense on the Arctic, that northern hemisphere summers were much longer, and that the tree line extended to the Arctic coast. Even with this much less icy Arctic:

http://www.ncdc.noaa.gov/paleo/globalwarming/images/polarbigb.gif

the polar bears are with us today.

So, rather than ecosystems adapting, I believe the evolutionary past indicates most species have evolved to tolerate a large variability in climate already within their genes.

But further, I don’t believe the rate of change, even in climatic ( not diurnal or annual ) terms is unprecedented.

I track the major global temperature sets, including the Hadley CRU.

The modern trend ( since 1979 ) is actually slightly less than the trend from 1910 through 1945. You can see that here:

http://www.cru.uea.ac.uk/cru/info/warming/gtc.gif

I do believe the significance, particularly of the luke warming, is greatly exaggerated.

So, put me down as yes – global warming is real and in principle a result of carbon emissions, but no – there is not a big danger associated with it.

In Foster (Tamino) & Rahmstorf, I do not see the trend line with slope on the graph. If you look at the average position of the curves at the start and end, it looks as though the “corrected” temperatures went up about 0.45 C over 30 years. So in the roughly 90 years until 2100, it should go up about 3X that amount, or less than 1.5 C. Using the pre-industrial temperature to try to say it will go up 3 C is asinine, as it double counts what has already occurred.
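The straight-line extrapolation in this comment can be written out as a short sketch. The inputs (about 0.45 C over 30 years, about 90 years remaining) are the commenter's own eyeball readings, not values taken from the Foster and Rahmstorf paper, and the function name is hypothetical. It simply extends the observed rate at a constant pace.

```python
# Sketch of the linear extrapolation described in the comment above.
# Assumptions (from the comment): about 0.45 C of rise read off the graph
# over 30 years, and about 90 years remaining until 2100.
# The function name is hypothetical.

def extrapolate(rise_c, span_years, horizon_years):
    """Extend an observed rise linearly over a longer horizon."""
    rate_per_year = rise_c / span_years
    return rate_per_year * horizon_years

projected = extrapolate(0.45, 30, 90)
print(round(projected, 2))  # 1.35
```

This is the "about 3X that amount, or less than 1.5 C" figure; the implied rate is 0.15 C per decade.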

But that 1.5 C is the trend if there are no volcanoes or other cooling events of the kind that Tamino/Foster removed to get that graph. Which suggests to me that the warming could easily be delayed, even if we accept that it is mostly due to CO2 and “in-the-pipeline”. So that gives us more than 100 years to try to come up with a fix. Which really should make it ok to study it another 10-15 years to see if temperatures start to follow the models or jump ahead of them, or if we have a longer hiatus in warming or even a slight cooling.

These last 13 years with little warming have at least bought us another 13 years of study. Why the insane panicked rush to waste money on more Solyndras now just in case the temperature goes up a few degrees? All our politics and much of our environmentalism is fear-based. One side wants to attack Iran and the other wants to panic over a potential climate threat, but both want to waste trillions of dollars and do it NOW.

[…] to the question: Just how well do the prevailing climate models describe the observations? In a January 2012 post at MasterResource, published climate scientist Chip Knappenberger discusses the 2011 paper of Dr. Benjamin Santer […]

[…] Knappenberger has a post at Master Resource entitled “Global Lukewarming: A Great Intellectual Year in 2011“. An extensive overview of recent data and research from the lukewarmer […]

[…] temperature rise towards the low end of this range is not worth worrying too much about (the ‘lukewarming’ position), while a rise near the higher end of the range is potentially much more problematic (the alarmist […]

[…] warming continues the plodding 0.14°C per decade warming trend of the past 33 years. These data call into question the climate sensitivity assumptions underpinning the big scary warming projections popularized by NASA scientist James Hansen, the UN […]

[…] even this number may be on the high side if the climate sensitivity is lower than about 3°C (see here for more on recent findings concerning the climate […]

[…] year ago, in this space, I highlighted some positive lukewarmer developments in 2011. These included findings that the observed […]
