“The Great Climate Debate” at Rice University: The Science is NOT Settled (Richard Lindzen and Gerald North to Revisit the IPCC ‘Consensus’)

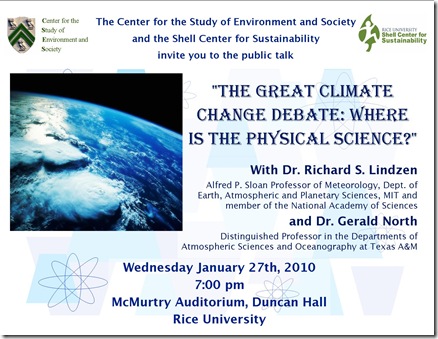

By Robert Bradley Jr. -- January 25, 2010

On Wednesday evening, January 27th, a discussion of the latest developments in climate change science will be held on the campus of Rice University (directions below for those nearby). This discussion/debate is cosponsored by the Shell Center for Sustainability and the Center for the Study of Environment and Society at Rice. Here is the flyer:

Defending the IPCC consensus regarding natural-versus-anthropogenic climate change is Gerald R. North, Distinguished Professor of the Physical Section, Department of Atmospheric Sciences and the Department of Oceanography at Texas A&M University.

Richard S. Lindzen, the Alfred P. Sloan Professor of Meteorology at the Massachusetts Institute of Technology, will challenge the IPCC consensus, arguing that real-world climate sensitivity lies below the iconic range of 2°C–4.5°C. Questions about ‘Climategate’ and the newly emerged ‘Himalayangate’ (the latter exposed by Dr. North’s Texas A&M colleague, John Nielsen-Gammon) are expected to be covered in the question/answer period after the scientists’ formal 30-minute presentations.

[DIRECTIONS: McMurtry Auditorium is located in Duncan Hall. Visitor parking is available to anyone with a credit card. Visitor Parking “L” and Founder’s Court Visitor are the closest lots to Duncan Hall, especially when using the Rice main entrance on South Main Street at Sunset Blvd. Another parking lot is the North Lot, a 5–8 minute walk from Duncan Hall, on Rice Blvd. using entrance #21 or #20.

Rice campus map: http://www.rice.edu/maps/maps.html]

Having this climate debate is very good news. The last climate science debate at Rice University was in the summer of 2000 at the James A. Baker Institute. Therein lies a story….

The 2000 Conference: The End of Open Science Debate

I attended this conference, which drew a large audience. It was fair and balanced across its different dimensions, with ‘skeptics’ of climate alarmism present in each area: Pat Michaels on the physical science; MasterResource’s own Ken Green on public policy; and Senator Chuck Hagel (R-Nebraska) on politics.

What I remember most is talking to James Hansen and his remarking that anthropogenic global warming could save us from a new ice age. In his own eyes, he was making an ironic point about high climate sensitivity; to me, he was making the point that extreme scenarios can work in both directions–good and bad.

A summary of the conference carefully balanced the alarmist and skeptical views of the issues. I reproduce it in the Appendix below because of its historical significance given where the science, economics, and policy issues are nearly ten years later.

The Non-Publication of the 2001 Conference Proceedings: Neal Lane Returns to Rice

Neal Lane, assistant to the president of the United States for science and technology, director of the U.S. Office of Science and Technology Policy, and former director of the National Science Foundation, has rejoined the faculty at Rice University.

Lane, 62, returned to Rice from the Clinton White House to take the position of University Professor in the Department of Physics and Astronomy and senior fellow at the James A. Baker III Institute for Public Policy. University Professor is a special appointment entitling the holder to teach in any department in the university. Lane is the only person ever to hold the position at Rice.

“This is another signal day for Rice University, as we welcome back our colleague and faculty member Neal Lane, who has served his country with such distinction in several vital national positions,” Rice president Malcolm Gillis said.

“I have had the privilege to serve in the Clinton–Gore administration for more than seven years and now am excited to be coming home to Rice, where my wife, Joni, and I have so many friends,” Lane said. “I look forward to teaching again and working with Rice’s outstanding students and faculty on physics research and science and technology policy.”

“Neal Lane will make a significant contribution to the Baker Institute’s future research and programs on science, technology and engineering issues,” said Director Edward Djerejian. “He will, in effect, be a natural bridge between the Baker Institute and The Wiess School of Natural Sciences and The George R. Brown School of Engineering….

In his most recent role, Lane provided the president with advice in all areas of science and technology policy, and he coordinated policy and programs across the federal government. He also cochaired the president’s Committee of Advisers on Science and Technology Policy and managed the president’s National Science and Technology Council….

Dr. Lane also blocked a scheduled talk by Bjørn Lomborg at the Baker Institute in 2004. Instead, Lomborg was allowed to speak at the business school before an enthusiastic crowd of 200. Dr. Lane was following the Holdren line that Lomborg was an anti-science propagandist, a view that I have rebutted in a 2003 piece, The Heated Energy Debate.

History may harshly judge Neal Lane’s academic censorship; after all, a fair debate on a VERY contentious issue has been precluded for many years for students and members of the public who trust the Baker Institute. And the question must be asked: would James A. Baker himself approve of this bias coming from one gatekeeper?

A New Beginning for Lane/the Baker Institute?

Perhaps, just perhaps, the new scientific and political climate will inspire the Baker Institute to join the other campus groups, the Shell Center for Sustainability and the Center for the Study of Environment and Society, in open, two-sided debate. I hope this Wednesday night’s discussion will be well attended and will inspire a new beginning in this regard.

APPENDIX: “GLOBAL WARMING: SCIENCE AND POLICY” CONFERENCE

As published in the January 2001 “Baker Institute Report” (no. 15)

Conference coordinators were Peter Hartley, chairman of economics; Andre Droxler, associate professor of geology and geophysics; and Kenneth Medlock, Baker Institute Scholar.

SUMMARY: “The following questions were identified as important to [the physical science debate]:

• What explains the divergence between measured temperature trends at different levels of the atmosphere and in different hemispheres or locations? Are some of the measures faulty, or do the GCMs need to be modified to explain real differences?
• What does change in the stratosphere imply about climate at the surface?
• What role do oceans play, both as a sink and as a global thermostat, and how can they be included better in climate models?
• What is the role of the sun in the earth’s climate?
• How might clouds be included in models better than they are at present?
• What does the geological record tell us about global warming?
• Can the development of better geological records help predict rapid (a decade or less) changes in climate?
• What are the odds of a sudden catastrophic event, and what might the warning signs be?
• How does CO2 compare with soot and other GHGs as a source of temperature change?”

The scientific, economic, and political issues surrounding global warming were debated extensively at a Baker Institute conference September 6–8, 2000. Titled “Global Warming: Science and Policy,” the conference featured experts from diverse fields, such as atmospheric physics, astronomy, biology, economics, geology, oceanography, and politics, who offered their perspectives on this contentious scientific and political issue.

A central question of the conference was to what extent human activity affects climates at the global level. The costs and benefits of possible climate change were discussed, along with the geographic distribution of those effects. The feasibility and costs of mitigating human influences on the global climate also were considered.

The accumulation in the atmosphere of greenhouse gases (GHGs), such as carbon dioxide (CO2), now is seen as a greater threat to human welfare than are air and water pollution. Unlike many air or water pollutants, CO2 emissions are an unavoidable byproduct of fossil fuel combustion and, therefore, of modern economic activity. Over the past 100 years, industrial activity, the demand for electricity, and the demand for transportation services have increased exponentially. The degree to which humans rely on fossil fuels is indicated by the fact that in 1997 fossil fuels provided about 86 percent of primary energy requirements globally. As a result, since 1958 the concentration of CO2 in the atmosphere has risen about 14 percent and is now about 30 percent above pre-industrial levels.

Furthermore, the Intergovernmental Panel on Climate Change (IPCC) currently estimates that CO2 concentrations will rise during the next century to a level 90 percent above pre-industrial levels.

Although CO2 does not harm humans in the way that nitrous or sulfurous oxides do, some argue that the accumulation of carbon dioxide in the atmosphere will warm the earth’s climate. A positive correlation, since about 1970, between the rising atmospheric concentration of CO2 and rising average temperatures has led [to the] … hypothesis, referred to as the “greenhouse effect,” [in that] CO2 and other greenhouse gases absorb some of the infrared radiation that is emitted from the earth’s surface after the sun warms it. This, in turn, warms the atmosphere, thereby increasing the amount of water vapor. Increased water vapor then can amplify the effect of CO2 to produce noticeable temperature increases.

The fact that most warming is caused by higher humidity explains why the largest predicted temperature increases are at night in the cold winter air masses found in the polar regions. Since the coldest air masses are also the driest, they experience the largest percentage increases in humidity.

In contrast, increased humidity at tropical and temperate latitudes produces an increase in cloud cover, which tends to cool the atmosphere by reflecting incoming solar radiation. At temperate latitudes, higher humidity increases winter snowfall, which again reduces the absorption of incoming solar radiation.

Many factors apart from the role of water vapor were identified to complicate the modeling of global climates. For example, the net effects of the initial increase in temperature produced by CO2 are complicated by interactions between the atmosphere and the oceans. In particular, the oceans’ ability to store heat, and thereby regulate the earth’s climate, is largely unknown. There also is much to learn about the effects of upper atmospheric disturbances on the climate. For example, ozone depletion and changes in stratospheric winds were pointed to as having significant effects on climate.

Another complication is that increased CO2 can stimulate plant growth and, more generally, biosphere productivity. Since carbon compounds form a large part of living organisms, an expansion of the biosphere would tend to reduce CO2 concentrations in the atmosphere.

The existence of these types of competing factors makes the likely consequences of increased greenhouse gas concentrations difficult to predict. The only feasible way of making such predictions is to build complicated global computer models (the GCMs) that simulate the interactions among the various processes.

There is general agreement that the earth’s surface has been warming in recent decades, but uncertainty remains as to how much warming has resulted from increased CO2 and how much warming has resulted from other forcing phenomena. For example, it was argued that variation in solar activity could explain much of what has been observed in the surface temperature record. In addition, it was argued that control of greenhouse gases that are more potent than CO2 could be the most effective and easily implemented means of combating global warming.

There also is considerable uncertainty about the degree to which temperatures will rise over the next century. An increase in average temperatures of 2.0°C by 2100 was the median projection in the 1995 IPCC report. This figure is 23 percent below the IPCC’s 1992 median projection, 38 percent below its 1990 median projection, and 75 percent below the figure projected at the Toronto conference of 1988, the year the IPCC was created. The instability of the projections (a 75 percent drop in seven years, and almost 40 percent in five) is an indication of the uncertainty of climate science.
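[As a rough check on the percentages in the preceding paragraph, the earlier projections they imply can be backed out from the 2.0°C figure. The sketch below is Python and assumes each percentage is measured relative to the earlier projection. The report does not state the earlier figures, so the results are implied values rather than quoted ones, and the function name `implied_baseline` is hypothetical.

```python
def implied_baseline(current: float, pct_below: float) -> float:
    """Return the earlier value that `current` sits `pct_below` percent under.

    Assumes the percentage is relative to the earlier (larger) value:
        current = earlier * (1 - pct_below / 100)
    """
    return current / (1 - pct_below / 100)


# Median projection for 2100 from the 1995 IPCC report, as cited in the summary.
median_1995 = 2.0

# Percent by which the 1995 figure falls below each earlier projection (from the summary).
drops = {1992: 23, 1990: 38, 1988: 75}

# Implied earlier projections in degrees C, rounded to two decimals.
implied = {
    year: round(implied_baseline(median_1995, pct), 2)
    for year, pct in drops.items()
}

print(implied)  # {1992: 2.6, 1990: 3.23, 1988: 8.0}
```

Under that assumption, the 1988 Toronto figure works out to about 8°C and the 1990 figure to roughly 3.2°C, which matches the “75 percent drop in seven years, and almost 40 percent in five” that the summary describes.]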

In fact, it was recognized throughout the conference that today’s GCMs are inadequate, as indicated by the wide variability in the predictions of different models. However, it was argued that there is something to be learned by the fact that all of the GCMs are broadly consistent in predicting a warming trend.

The damage caused by global warming, were it to eventuate, could be considerable. For example, melting of land-based polar ice caps, combined with thermal expansion of the world’s oceans, could raise sea levels and flood many of the world’s port cities. In addition, adjusting to rising sea levels could be difficult because the change could occur abruptly. Initially, warming may cause a gradual melting of ice, but if large chunks of land-based ice fall into the ocean, they will melt more rapidly. Apart from the impact this would have on sea levels, the resulting influx of fresh water into the oceans could affect the circulation of ocean currents, producing further changes in climates.

There is geological evidence that suggests the world’s climate can switch rapidly from one stable state to another. Damage is likely to be greater when changes occur abruptly. The amount of CO2 that must accumulate before a catastrophic event would occur, however, is unknown. The timing and severity of any potential damage, therefore, are also difficult to predict.

Despite the uncertainties surrounding the causes and ramifications of global warming, governments are being urged to act. An international agreement, known as the Kyoto Protocol and as of yet to be ratified by any of its signatories, calling for the reduction of greenhouse gas emissions was signed in 1997. The Kyoto Protocol specifies a greenhouse gas emissions target of between 5 percent and 8 percent below 1990 levels by 2008–2012 for a group of industrialized nations (referred to as Annex I countries). Carbon taxes or direct controls could be used to achieve these targets, but they are likely to be very costly. Costs also will be higher the faster controls are enforced since reducing emissions in the short term generally requires reducing production, causing some degree of capital obsolescence.

Relatively low-cost methods of control, such as land use changes, the clean-development mechanism (CDM), and emissions permit trading, have been proposed, but methods of implementation have yet to be worked out. The methods of control and the speed of enforcement will determine the magnitude of the costs of compliance. Countries also may incur lower costs if weak enforcement allows controls to be evaded.

Modeling the economic cost of taking CO2 abatement measures is just as difficult as modeling the climate. Uncertainty pervades the exercise, due to a number of problems. The lack of clearly defined guidelines for reducing CO2 emissions, an inadequate understanding of the potential of new technologies, and more conventional problems of projecting economic growth, the composition of fossil fuel use, and projecting energy prices each contributes to this uncertainty.

Therefore, while we cannot be certain whether or not global warming is an immediate and serious threat, we also cannot be certain about the economic costs of taking steps to eliminate an uncertain threat.

A potential solution to the global warming problem lies in the wake of the development and implementation of new technologies. Energy sources such as solar power, fuel cells, hybrid technologies, and so forth could greatly increase efficiency of fossil fuel use or could eliminate it altogether. Computer technologies also have the potential to increase energy efficiency by more adequately regulating manufacturing facilities and the like. This could considerably reduce CO2 emissions without imposing high economic cost. However, the time horizon for cost competitive implementation is uncertain, and, if too far into the future, damages from global warming could be high.

In addition to the panel of experts in their respective fields at the conference, four keynote speakers addressed the participants and attendees, each presenting his own perspective on the issues at hand.

Neal Lane, assistant to the U.S. president for science and technology and director of the Office of Science and Technology Policy, addressed the difficulties with reconciling science and policy. He noted that science must advance in understanding how climate has changed in the past and how it will change in the future so that an accurate assessment of human activity can be made. He recognized the large amount of uncertainty in predicting future climates but presented evidence of the correlation between global temperature and atmospheric CO2 levels.

Lane also presented possible climate-change impacts on different regions of the U.S., as predicted by different GCMs, that are, as he argued, aimed at raising the awareness of groups and individuals. He also stressed the global nature of climate change and argued that the largest impact on the U.S. could come from climate-related disruptions in other parts of the world.

Finally, Lane recognized that policy will move forward, as it operates on a different time frame than does science. So, the best possible science needs to be communicated to policymakers as it becomes available.

Richard Burt, former ambassador to the Federal Republic of Germany and assistant secretary of State for Europe, addressed issues of international sovereignty. Recognizing that a global agreement to abate CO2 emissions will require the formation of an international regulatory body, he questioned if governments should cede power to a new system of global governance. He argued that a United Nations-type body would be inefficient and, perhaps more important, would hold no democratic accountability. Thus, Burt claimed, any such body that attempted to wield power would be rejected, particularly in the U.S., because it is anti-democratic in nature.

A more suitable model, he added, would be one in which control and enforcement was instituted at a national level so that sovereignty is not infringed.

U.S. Senator Charles Hagel (Nebraska) argued for a new approach to the issue of climate change. Citing the ongoing debates within the scientific community and the lack of a definitive consensus prediction of climate change, he claimed that radical and swift action to abate CO2, such as that called for in the Kyoto Protocol, is unnecessary. Hagel also added that such action could cause detriment to the U.S. economy that outweighs any benefit.

He argued instead for a cautious approach that would allow for the advancement of climate science while preserving economic well-being.

Robert Curl, the Harry C. and Olga K. Wiess Professor of Natural Sciences at Rice and a 1996 Nobel laureate for chemistry, acknowledged that science often identifies problems that require a policy response but offers no clear means of dealing with those problems. According to Curl, the global warming issue is just one in a number of such issues. He emphasized the need for consensus within the scientific community so that clear policy direction can be formed. He recognized, as Lane did, that policy will move forward regardless of the state of science; thus, accurate and sound science is all the more necessary.

Although a clear policy agenda did not emerge from the conference, substantial agreement was reached on several points. Better measures of temperatures at ground level are a top policy priority and could be attained relatively cheaply.

… thoroughly the predictions of computer models of the earth’s atmosphere.

Better models will require improved understanding of the various interactions within the climate system….

Any chance they will make videos of the talks, or powerpoints, available?

Thanks for this information, Rob. The Baker Institute Report is indeed an important historical document, one I hadn’t before read. Two quoted passages from it are, to me, even more salient today, since they distill much of the issue’s essence:

“Modeling the economic cost of taking CO2 abatement measures is just as difficult as modeling the climate. Uncertainty pervades the exercise, due to a number of problems. The lack of clearly defined guidelines for reducing CO2 emissions, an inadequate understanding of the potential of new technologies, and more conventional problems of projecting economic growth, the composition of fossil fuel use, and projecting energy prices each contributes to this uncertainty.”

“Finally, Lane recognized that policy will move forward, as it operates on a different time frame than does science. So, the best possible science needs to be communicated to policymakers as it becomes available.”

Here is some local media coverage.

From Rice University: http://www.media.rice.edu/media/NewsBot.asp?MODE=VIEW&ID=13619

From Eric Berger of the Houston Chronicle: http://blogs.chron.com/sciguy/archives/2010/01/post_134.html?utm_source=feedburner&utm_medium=feed&utm_campaign=Feed:+houstonchronicle/sciguy+(SciGuy)

Well, I had to find it myself but if anyone is interested, here is the video:

http://wmdp.rice.edu/Centers/CSES/ClimateChg-27Jan10/ClimateChg-27Jan10.mp4

I found it a little jerky but I think that’s my computer.

[…] to host a climate forum/debate in 2010 between Gerald North (Texas A&M) and Richard Lindzen (MIT), which ended up being […]
