Is the Climate Science Debate Over? No, It’s Just Getting Very Interesting (with welcome news for mankind)

By Marlo Lewis -- July 24, 2009

How many times have you been told that the debate on the science of climate change is “over”? Probably almost as many times as Al Gore has traveled in private jets and limousines to urge audiences to repent of their fuelish ways.

Although tirelessly intoned by politicians, major media, advocacy groups, academics, and even some Kyoto critics, the “debate is over” mantra is just plain false. The core issues of climate-change attribution, climate sensitivity, and even anthropogenic detection remain very much in play.

Detection

The world has warmed overall during the past 130 years, as evidenced by melting glaciers, longer growing seasons, and both proxy and instrumental data. However, the main era of “anthropogenic” global warming supposedly began in the mid-1970s, and ongoing research by retired meteorologist Anthony Watts leaves no doubt that in recent decades, the U.S. surface temperature record–reputed to be the best in the world–is unreliable and riddled with false warming biases.

Watts and a team of more than 650 volunteers have visually inspected and photographically documented 1,003, or 82%, of the 1,221 climate monitoring stations overseen by the U.S. Weather Service. In a report summarizing an earlier phase of the team’s investigation (a survey of 860+ stations), Watts says, “We were shocked by what we found.” He continues:

We found stations located next to exhaust fans of air conditioning units, surrounded by asphalt parking lots and roads, on blistering-hot rooftops, and near sidewalks and buildings that absorb and radiate heat. We found 68 stations located at wastewater treatment plants, where the process of waste digestion causes temperatures to be higher than in surrounding areas.

In fact, we found that 89 percent of the stations–nearly 9 of every 10–fail to meet the National Weather Service’s own siting requirements that stations must be 30 meters (about 100 feet) or more away from an artificial heating or radiating/reflecting heat source. In other words, 9 of every 10 stations are likely reporting higher or rising temperatures because they are badly sited.

“It gets worse,” Watts continues:

We observed that changes in the technology of temperature stations over time also have caused them to report a false warming trend. We found gaps in the data record that were filled in with data from nearby sites, a practice that propagates and compounds errors. We found adjustments to the data by both NOAA and another government agency, NASA, cause recent temperatures to look even higher.

How big a problem is this? According to Watts, “The errors in the record exceed by a wide margin the purported rise in temperature of 0.7ºC (about 1.2ºF) during the twentieth century.” Based on analysis of 948 stations rated as of May 31, 2009, Watts estimates that 22% of stations have an expected error of 1ºC, 61% have an expected error of 2ºC, and 8% have an expected error of 5ºC.

Watts concludes that, “this record should not be cited as evidence of any trend in temperature that may have occurred across the U.S. during the past century.” He further concludes: “Since the U.S. record is thought to be ‘the best in the world,’ it follows that the global database is likely similarly compromised and unreliable.”

A related issue is the influence of urban heat islands on long-term temperature records. Climate Change Reconsidered, a report by the Nongovernmental International Panel on Climate Change (NIPCC), written by Drs. Craig Idso and S. Fred Singer with 35 contributors and reviewers, reviews more than 40 studies on urban heat islands. For example, a study by Oke (1973) of the urban heat island strength of 10 settlements in the St. Lawrence Lowlands of Canada found that a population as small as 1,000 people could generate a heat island effect of 2ºC-2.5ºC. From this study and the others reviewed, the NIPCC concludes:

It appears almost certain that surface-based temperature histories of the globe contain a significant warming bias introduced by insufficient corrections for the non-greenhouse-gas-induced urban heat island effect. Furthermore, it may well be impossible to make proper corrections for the deficiency, as the urban heat island of even small towns dwarfs any concomitant augmented greenhouse effect that may be present [p. 95; emphasis in original].

Obviously, temperature data are the starting point of any analysis of global warming. But if we can’t trust the U.S. and IPCC temperature records, how do we know how much global warming has actually occurred?

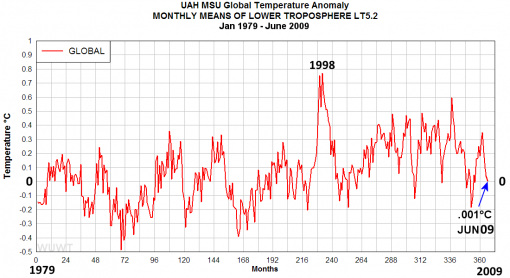

Satellite observations are not influenced by heat islands or subject to the quality control problems detailed by Watts, and satellite records tally well with weather balloon observations–an independent database. However, the “debate is over” crowd is unlikely to embrace this solution. The satellite record shows a relatively slow rate of warming–about 0.13ºC per decade–hence a relatively insensitive climate.

Moreover, as can be seen in the above chart of the University of Alabama-Huntsville (UAH) satellite record, some of the 0.13ºC/decade “trend” comes from the 1998 El Nino warming pulse. Remove 1998, and the 30-year satellite record trend drops to 0.12ºC/decade.
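To see how a single warm year can nudge a fitted trend, here is a minimal Python sketch. The anomaly values are synthetic, invented purely for illustration (a steady rise plus one spike placed in 1998); they are not the UAH record, and `ols_slope` and `trend_per_decade` are hypothetical helper names.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def trend_per_decade(years, anomalies, exclude=()):
    """Linear trend in degrees C per decade, optionally skipping some years."""
    kept = [(y, a) for y, a in zip(years, anomalies) if y not in exclude]
    xs = [y for y, _ in kept]
    ys = [a for _, a in kept]
    return 10.0 * ols_slope(xs, ys)


# Synthetic annual anomalies (assumption, not real data):
# a steady 0.012 C/yr rise with one El Nino-like spike added in 1998.
years = list(range(1979, 2009))
anomalies = [0.012 * (y - 1979) for y in years]
anomalies[years.index(1998)] += 0.5

full = trend_per_decade(years, anomalies)
without_1998 = trend_per_decade(years, anomalies, exclude={1998})

print(f"trend with 1998:    {full:.3f} C/decade")
print(f"trend without 1998: {without_1998:.3f} C/decade")
```

A spike late in the record pulls the fitted slope up; the same spike early in the record would pull it down. How much any real year matters depends on the actual data, which this sketch does not use.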

Attribution

The IPCC, the leading spokesman for the alleged scientific consensus, claims that, “Most of the observed increase in global average temperatures since the mid-20th century is very likely due to the observed increase in anthropogenic greenhouse gas concentrations.” How does the IPCC know this? The IPCC offers three main reasons.

First, according to the IPCC, “Paleoclimate reconstructions show that the second half of the 20th century was likely the warmest 50-year period in the Northern hemisphere in 1300 years” (IPCC AR4, WGI, Chapt. 9, p. 702). The warmth of recent decades coincided with a rapid increase in GHG concentrations. Therefore, the IPCC reasons, most of the recent warming is likely due to anthropogenic GHG emissions.

This argument is unpersuasive if the warming of recent decades is not unusual or unprecedented in the past 1300 years. As it happens, numerous studies indicate that the Medieval Warm Period (MWP)–roughly the period from AD 800 to 1300, with peak warmth occurring about AD 1050–was as warm as or warmer than the Current Warm Period (CWP).

The Center for the Study of Carbon Dioxide and Global Change has analyzed more than 200 peer-reviewed MWP studies produced by more than 660 individual scientists working in 385 separate institutions from 40 countries. The Center divides these studies into three categories–those with quantitative data enabling one to infer the degree to which the peak of the MWP differs from the peak of the CWP (Level 1), those with qualitative data enabling one to infer which period was warmer (Level 2), although not by how much, and those with data enabling one to infer the existence of an MWP in the region studied (Level 3). An interactive map showing the sites of these studies is available at CO2Science.org.

Only a few Level 1 studies determined the MWP to have been cooler than the CWP; the vast majority indicate a warmer MWP. On average, the studies indicate that the MWP was 1.01ºC warmer than the CWP.

Figure Description: The distribution, in 0.5ºC increments, of Level 1 studies that allow one to identify the degree to which peak MWP temperatures either exceeded (positive values, red) or fell short of (negative values, blue) peak CWP temperatures.

Similarly, the vast majority of Level 2 studies indicate a warmer MWP:

Figure Description: The distribution of Level 2 studies that allow one to determine whether peak MWP temperatures were warmer than (red), equivalent to (green), or cooler than (blue), peak CWP temperatures.

The IPCC’s second main reason for attributing most recent warming to the increase in GHG concentrations is that climate models “cannot reproduce the rapid warming observed in recent decades when they only take into account variations in solar output and volcanic activity. However . . . models are able to simulate observed 20th century changes when they include all of the most important external factors, including human influences from sources such as greenhouse gases and natural external factors” (IPCC, AR4, Chapt. 9, p. 702).

This would be decisive if today’s models accurately simulate all important modes of natural variability. In fact, models do not accurately simulate the behavior of clouds and ocean cycles. They may also ignore important interactions between the Sun, cosmic rays, and cloud formation.

Richard Lindzen of MIT spoke to this point at the Heartland Institute’s recent (June 2, 2009) Third International Conference on Climate Change:

What was done [by the IPCC], was to take a large number of models that could not reasonably simulate known patterns of natural behavior (such as ENSO, the Pacific Decadal Oscillation, the Atlantic Multi-Decadal Oscillation), claim that such models nonetheless adequately depicted natural internal climate variability, and use the fact that models could not replicate the warming episode from the mid seventies through the mid nineties, to argue that forcing was necessary and that the forcing must have been due to man. The argument makes arguments in support of intelligent design seem rigorous by comparison.

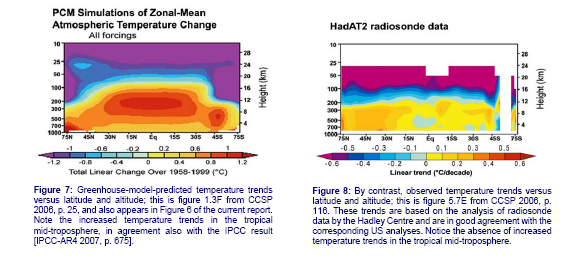

“Fingerprint” studies are the third basis on which the IPCC attributes most recent warming to anthropogenic greenhouse gases. Climate models project a specific pattern of warming through the vertical profile of the atmosphere–a greenhouse “fingerprint.” If the observed warming pattern matches the model-projected fingerprint, that would be strong evidence that recent warming is anthropogenic. Conversely, notes the NIPCC, “A mismatch would argue strongly against any significant contribution from greenhouse gas (GHG) forcing and support the conclusion that the observed warming is mostly of natural origin” (NIPCC, p. 106).

Douglass et al. (2007) compared model-projected and observed warming patterns in the tropical troposphere. The observed pattern is based on three compilations of surface temperature records, four balloon-based records of the surface and lower troposphere, and three satellite-based records of various atmospheric layers–10 independent datasets in all.

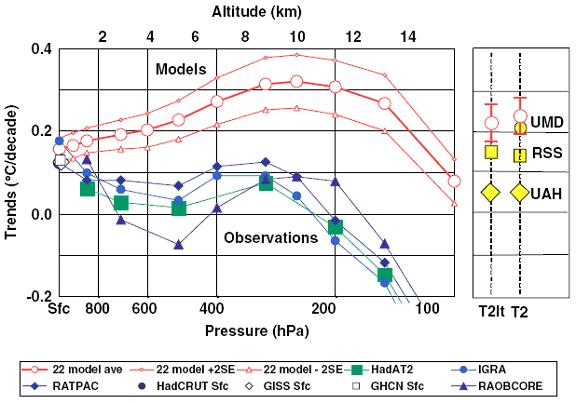

“While all greenhouse models show an increasing warming trend with altitude, peaking around 10 km at roughly two times the surface value,” observes the NIPCC, “the temperature data from balloons give the opposite result; no increasing warming, but rather a slight cooling with altitude” (p. 107). See the figures below.

The mismatch between the model-predicted greenhouse fingerprint and the observed pattern is profound. As the Douglass team explains: “Model results and observed temperature trends are in disagreement in most of the tropical troposphere, being separated by more than twice the uncertainty of the model mean. In layers near 5 km, the modeled trend is 100% to 300% higher than observed, and above 8 km, modeled and observed trends have opposite signs.”

Source: Douglass et al. (2007). Temperature trends for the satellite era (ºC/decade). HadCRUT, GHCN, and GISS are compilations of surface temperature observations. IGRA, RATPAC, HadAT2, and RAOBCORE are balloon-based observations of the surface and lower troposphere. UAH, RSS, and UMD are satellite-based data for various layers of the atmosphere. The 22-model average comes from an ensemble of 22 model simulations from the most widely used models worldwide. The red lines are the +2 and -2 standard errors of the mean from the 22 models.
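The comparison described in the caption, an observed trend set against a band of two standard errors around the model mean, can be sketched in a few lines of Python. The trend values below are hypothetical placeholders chosen for illustration, not the Douglass et al. numbers, and the function names are likewise assumptions.

```python
import math


def mean_and_standard_error(values):
    """Return the sample mean and the standard error of that mean."""
    n = len(values)
    mean = sum(values) / n
    sample_variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    return mean, math.sqrt(sample_variance / n)


def outside_two_se(observed, model_values):
    """True if the observation lies more than 2 standard errors from the model mean."""
    mean, se = mean_and_standard_error(model_values)
    return abs(observed - mean) > 2.0 * se


# Hypothetical model trends at one altitude (C/decade), not real model output.
model_trends = [0.20, 0.25, 0.22, 0.30, 0.18, 0.27, 0.24, 0.21]
observed_trend = 0.10  # hypothetical observation

mean, se = mean_and_standard_error(model_trends)
print(f"model mean = {mean:.3f}, 2 x SE = {2 * se:.3f}")
print("observation outside the band:", outside_two_se(observed_trend, model_trends))
```

Note that the standard error of the mean shrinks as more models are added, so the band describes uncertainty in the ensemble average rather than the spread among individual models.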

The NIPCC concludes that the mismatch of observed and model-calculated fingerprints “clearly falsifies the hypothesis of anthropogenic global warming (AGW)” (p. 108). I would put the state of affairs more cautiously. In view of (1) significant evidence that the MWP was as warm as or warmer than the CWP, (2) the inability of climate models to simulate important modes of natural variability, and (3) the failure of observations to confirm a greenhouse fingerprint in the tropical troposphere, the IPCC claim that “most” recent warming is “very likely” anthropogenic should be considered a boast rather than a balanced assessment of the evidence.

Climate Sensitivity

The most important unresolved scientific issue in the global warming debate is how sensitive (reactive) the climate is to increases in GHG concentrations.

Climate sensitivity is typically defined as the global average surface warming following a doubling of carbon dioxide (CO2) concentrations above pre-industrial levels. The IPCC says a doubling is likely to produce warming in the range of 2ºC to 4.5ºC, with a most likely value of about 3ºC. The IPCC presents a range rather than a specific value because of uncertainty regarding the strength of the relevant feedbacks.

In a hypothetical climate with no feedbacks, positive or negative, a CO2 doubling would produce 1.2ºC of warming (IPCC, AR4, WGI, Chapt. 8, p. 631). In most climate models, the dominant feedbacks are positive, meaning that the warmth from rising GHG levels causes other changes (in water vapor, clouds, or surface reflectivity, for example) that either increase the retention of outgoing long-wave radiation (OLR) or decrease the reflection of incoming short-wave radiation (SWR).

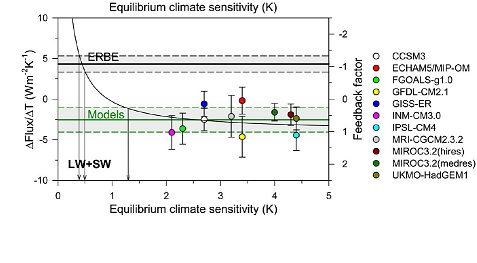

In his speech at the June 2 Heartland Institute conference, Professor Lindzen summarized his research on climate sensitivity, which has since been accepted for publication by Geophysical Research Letters. Lindzen argues that climate feedbacks and sensitivity can be inferred from observed changes in OLR and SWR following observed changes in sea-surface temperatures. For fluctuations in OLR and SWR, Lindzen and his colleagues used the 16-year record (1985-1999) from the Earth Radiation Budget Experiment (ERBE), as corrected for altitude variations associated with satellite orbital decay. For sea surface temperatures, they used data from the National Centers for Environmental Prediction. For climate model simulations, they used 11 IPCC models forced with the observed sea-surface temperature changes.

The results are striking. All 11 IPCC models show positive feedback, “while ERBE unambiguously shows a strong negative feedback.” The ERBE data indicate that the sensitivity of the actual climate system “is narrowly constrained to about 0.5ºC,” Lindzen estimates.
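The link between feedbacks and sensitivity can be made concrete with the textbook simplification ΔT = ΔT0 / (1 − f), where ΔT0 is the no-feedback warming (the 1.2ºC quoted above) and f is a net feedback factor. The sketch below assumes that relation; it is a common idealization, not a formula spelled out in the passages quoted here, and the function names are hypothetical.

```python
NO_FEEDBACK_WARMING_C = 1.2  # no-feedback warming per CO2 doubling, as quoted in the article


def sensitivity(feedback_factor, no_feedback=NO_FEEDBACK_WARMING_C):
    """Warming per doubling under the assumed relation dT = dT0 / (1 - f)."""
    if feedback_factor >= 1.0:
        raise ValueError("feedback factor must be below 1 for a stable response")
    return no_feedback / (1.0 - feedback_factor)


def implied_feedback(sensitivity_c, no_feedback=NO_FEEDBACK_WARMING_C):
    """Invert the same relation: the net feedback factor implied by a sensitivity."""
    return 1.0 - no_feedback / sensitivity_c


# Sensitivity values taken from the article text.
for label, value_c in [
    ("IPCC low end", 2.0),
    ("IPCC most likely", 3.0),
    ("IPCC high end", 4.5),
    ("Lindzen ERBE estimate", 0.5),
]:
    print(f"{label:>22}: {value_c:.1f} C  ->  f = {implied_feedback(value_c):+.2f}")
```

Under this simplification, any sensitivity above 1.2ºC implies a positive net feedback factor and any sensitivity below it implies a negative one, which is the sense in which the model results and the ERBE-based estimate point in opposite directions.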

At the Heartland Institute’s Second International Conference on Climate Change (March 2009), Dr. William Gray of Colorado State University presented satellite-based research that may explain the low climate sensitivity the Lindzen team infers from the ERBE data.

The IPCC climate models assume that CO2-induced warming significantly increases upper troposphere clouds and water vapor, trapping still more OLR that would otherwise escape to space. Most of the projected warming in the models comes from this positive water vapor/cloud feedback, not from the CO2. Satellite observations do not support this hypothesis, Gray contends:

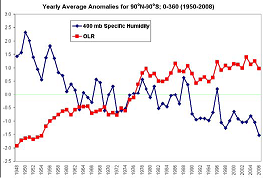

Observations of upper tropospheric water vapor over the last 3-4 decades from the National Centers for Environmental Prediction/National Center for Atmospheric Research (NCEP/NCAR) reanalysis data and the International Satellite Cloud Climatology Project (ISCCP) data show that upper tropospheric water vapor appears to undergo a small decrease while Outgoing Longwave Radiation (OLR) undergoes a small increase. This is the opposite of what has been programmed into the GCMs [General Circulation Models] due to water vapor feedback.

The figure below comes from the NCEP/NCAR reanalysis of upper troposphere water vapor and OLR.

NCEP/NCAR reanalysis of standardized anomalies at 400 mb (~7.5 km altitude) of water vapor content (i.e., specific humidity, in blue) and OLR (in red) from 1950 to 2008. Note the downward trend in moisture and upward trend in OLR.

Gray’s paper deals with water vapor in the upper troposphere. What about high-altitude cirrus clouds, which climate models also predict will increase and trap more OLR as GHG concentrations increase?

Spencer et al. (2007) found a strong negative cirrus cloud feedback mechanism in the tropical troposphere. Instead of steadily building up as the tropical oceans warm, cirrus cloud cover suddenly contracts, allowing more OLR to escape. Dr. Roy Spencer of the University of Alabama in Huntsville, who directed the study, estimates that if this mechanism operates on decadal time scales, it would reduce model estimates of global warming by 75%.

A 2008 study by Spencer and colleague William D. Braswell examines the issue of climate feedbacks related to low-level clouds. Lower troposphere clouds tend to cool the Earth by reflecting incoming SWR. Observations indicate that warmer years have less cloud cover compared to cooler years. Modelers have interpreted this correlation as a positive feedback effect in which warming reduces low-level cloud cover, which then produces more warming.

Spencer and Braswell found that climate modelers could be mixing up cause and effect. Random variations in cloudiness can cause substantial decadal variations in ocean temperatures. So it is equally plausible that the causality runs the other way, and increases in sea-surface temperature are an effect of natural cloud variations. If so, then climate models forecast too much warming. For more on this, visit Spencer’s Web site.

In a study now in peer review for possible publication in the Journal of Geophysical Research, Spencer and colleagues analyzed 7.5 years of NASA satellite data and “discovered,” he reports on his Web site, “that, when the effects of clouds-causing-temperature-change is accounted for, cloud feedbacks in the real climate system are strongly negative.” “In fact,” he continues, “the resulting net negative feedback was so strong that, if it exists on the long time scales associated with global warming, it would result in only 0.6ºC of warming by late in this century.”

In related ongoing satellite research, Spencer finds new evidence that “most” warming of the past century “could be the result of a natural cycle in cloud cover forced by a well-known mode of natural climate variability: the Pacific Decadal Oscillation (PDO).”

Whether or not the PDO proves to be a major player in climate change (about which, more in a moment), Spencer has identified a potentially serious error in all IPCC modeling efforts:

Even though they never say so, the IPCC has simply assumed that the average cloud cover of the Earth does not change, century after century. This is a totally arbitrary assumption, and given the chaotic variations that the ocean and atmosphere circulations are capable of, it is probably wrong. Little more than a 1% change in cloud cover up or down, and sustained over many decades, could cause events such as the Medieval Warm Period or the Little Ice Age.

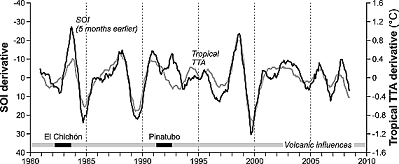

As it happens, McLean et al. (2009), a study just published in the Journal of Geophysical Research, finds that changes in the ocean cycle known as El Nino-Southern Oscillation (ENSO) account for 81% of troposphere temperature anomalies in the tropics since 1980.

Figure explanation: The dark line shows changes in the Southern Oscillation Index (SOI), the light line shows UAH satellite record temperature anomalies. The lag between them is five months. The indicated periods of volcanic activity were excluded from the calculations.

A key event in the longer 50-year record examined by the McLean team was the Great Pacific Climate Shift of 1976, when the PDO shifted from its negative (cooler) to positive (warmer) phase. The researchers state that the shift included a “bias” towards warm El Nino events, though “it is unclear whether this is a cause or consequence of the shift.” One thing, they say, is clear: Changes in temperature generally followed changes in ENSO by about 7 months, indicating that “ENSO is driving temperature rather than the reverse.”

The researchers conclude: “We have shown that ENSO and the 1976 Great Pacific Climate Shift can account for a large part of the overall warming and the temperature variation in tropical regions.” Specifically, changes in ENSO/PDO account for 72% of the variance in global troposphere temperature anomalies (GTTA) in the 29-year satellite record, 68% of GTTA in the 50-year weather balloon record, and, as noted, 81% of GTTA in the tropical troposphere.
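Statements of the form “X leads Y by N months and accounts for P% of the variance” typically rest on a lagged correlation. The Python sketch below shows the general idea on synthetic series; it is not the McLean et al. procedure or data, the helper names are hypothetical, and reading the squared correlation as “variance accounted for” is the usual simple-regression interpretation, assumed here.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def lagged_r(driver, response, lag):
    """Correlate driver[t] with response[t + lag] for a non-negative lag."""
    return pearson_r(driver[: len(driver) - lag], response[lag:])


def best_lag(driver, response, max_lag):
    """Lag (0..max_lag) with the largest absolute correlation."""
    return max(range(max_lag + 1), key=lambda lag: abs(lagged_r(driver, response, lag)))


# Synthetic monthly series (assumption, not real data): the response is the
# driver delayed by TRUE_LAG months, scaled, plus a small unrelated wiggle.
TRUE_LAG = 7
n = 240
base = [
    math.sin(2 * math.pi * t / 45.0) + 0.5 * math.sin(2 * math.pi * t / 17.0)
    for t in range(n + TRUE_LAG)
]
driver = base[TRUE_LAG:]  # driver[t] = base[t + TRUE_LAG]
response = [0.8 * base[t] + 0.1 * math.cos(t) for t in range(n)]  # 0.8 * driver[t - TRUE_LAG] + wiggle

lag = best_lag(driver, response, max_lag=12)
r = lagged_r(driver, response, lag)
print(f"best lag = {lag} months, r = {r:.3f}, r^2 = {r * r:.3f}")
```

A high lagged correlation shows that one series tracks the other after a delay; by itself it does not separate short-term fluctuations from a long-term trend, so how the series are filtered before correlating matters a great deal.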

Although McLean and his colleagues don’t explicitly say so, their study seems to imply that “consensus” science as represented by IPCC climate models underestimates natural climate variability and overestimates climate sensitivity to greenhouse forcing.

Conclusion

Reports of the death of climate skepticism have been greatly exaggerated. Not only is the climate science debate not “over,” it is just starting to get interesting. All the basic issues–detection, attribution, and sensitivity–are unsettled and more so today than at any time in the past decade.

A final thought–anyone who wants further convincing that the debate is not over should read the marvelous NIPCC report. On a wide range of issues (nine main topics and 60 sub-topics), the report demonstrates that the scientific literature allows, and even favors, reasonable alternative assessments to those presented by the IPCC.

Nice round up. The new paper by Lindzen is especially interesting.

Who’s done a study on the wave action washing onto ice and melting it, from the days of Ice Cutters to the enormous Cargo and Oil Freighters of today. I mean, IF we stopped would it just freeze over in a year or two?

Who knows this subject?

Kinetic Wave Action upon Polar Ice Caps?

Please let me know?

jonjohnston@hushmail.com

I fervently hope these rays of sunshine will penetrate the wall of mass human stupidity about a nonexistent problem and the good name of a gas, vital to our food supply, will be restored. I hope the perpetrators of this will be deprived of their ill intended gains and given the treatment they deserve. I suggest rationing Algore to 4 hrs of electricity for his mansion and 5 gallons of fuel for his jet per day, plus indefinite confinement to his home.

I have never wavered from my first conviction, made after my reading of the Kyoto Protocols, that something was deeply wrong in blaming CO2 for what seemed normal weather. Geologists, and particularly Paleontologists, tend to have knowledge of ancient weather. I began reviewing the Pleistocene ice ages and then back as far as the Cambrian, 1/2 billion years ago. But I was particularly interested in climates after living organisms appeared on land during and after the Devonian. The climate warmed so much during the age of the dinosaurs (200 to 65 million years ago) that CO2 outgassed from the warmed ocean depths to concentrations as high as 20 times the amount in today’s atmosphere. Both plant and animal life thrived throughout this long interval, with no evidence of extinctions. This fact alone made the current global warming hysteria preposterous. The recent findings of the Competitive Enterprise Institute that our government, starting 20 years ago, has been funding research totaling over $30 billion, designed to prove that CO2, produced by man’s activities, is proof that this insane idea is deeply embedded. The result of this huge expenditure is climate models that do not work when applied to present climates, a total, predictable failure by anyone versed in science. But the biggest scandal of all is the present administration’s effort to establish the “cap and trade” bill as a “sneak tax attack”, ostensibly to protect us from a non-existent hazard.

The “skeptics” (other than myself) seem to be giving the AGW hypothesis more credit than it deserves. With all of the evidence refuting the basic claims of the hypothesis, it should be safe to say now that man-made CO2 as a driver of “climate change”/”global warming” has been disproven beyond any reasonable doubt. Would any other scientific hypothesis on any other subject still be considered viable? This is no longer a question of science but of economic terrorism thinly veiled as science.

Whether we analyze the global temperature record, (and trust it) or whether we try to scientifically join all relevant data into a comprehensive, interactive database (and trust it) and then to create viable computer models, algorithms and other extrapolation devices to calculate projections (and trust this) – well, it seems to me, from a non-scientific viewpoint, that many of you scientists spend too much time invalidating or refuting each other’s conclusions rather than addressing what is clearly happening with neutral observation and reporting.

For instance this little quip: “A WPO poll found a remarkable 60% of those who watched Fox News almost daily believe that “Most scientists do not agree that climate change is occurring,” whereas only 30% who never watch it believe that. Only 25% of those who watch CNN almost daily hold that erroneous belief — and only 14% who listen to NPR or PBS almost daily.”

Yes, I had to read that quip several times, too. This statement about “most scientists” is concerning, as in defining a majority – from which field exactly? Of ALL scientists worldwide, in every field? Of just a quick polling of run-around-the-block-finding-a-few-group?

The fact that global “climate” is constantly in a state of change, affected by many different sets of variables, some interacting together, others in direct contradiction to another, does not seem to matter in all those equations science and math deliver. The problem is that certain amplitudes and frequencies of recent climate changes in our immediate observation timeline are rather unusual, and might mean soon enough unsustainable conditions for a majority of the human race. (This matters!) Hence the simple term some use: “Global Climate Destabilization” might be better.

Therefore, watching any news apparently creates such a bias by the viewing audience toward not recognizing simple, clear factual data, (e.g., that most scientists agree “Climate change is not occurring”), that we need to turn off the TV, cancel newspaper subscriptions and throw out the radio. At least then, when our house gets sucked up by a tornado, flooded by a crested river, collapsed by increased snow load, or ripped apart by a hurricane we can explain clearly that we collectively had no idea this change was approaching.

Recently the Brits -and their scientists- pointed out something about Maunder Minimum of sunspots waning, instead of what they should be doing, increasing over the next decade or so. This means our daily dose of radiation is decreasing and that perhaps, as in the early 18th century, we are now looking at the potential for a mini ice age.

http://www.earthtimes.org/climate/sun-spot-decrease-ice-age-scientists/1044/

Aha, just when we were all getting too hot under the collective scientific collar, the sun turns down the power for a while. Of course this observation is under discussion and refudiation as well…

To summarize my ranting is simple:

– We need a better protocol of dialogue and scientific reporting which reduces individual and personal bias so that factual data and concise scientific observations are not affected.

– We (as in the Global Climate description) are in an open system of many different interactive programs, forces and cycles, which apparently pose great difficulty in scientific prognostication. Our comprehension lacks and obfuscation rules. Without a definitive and clear interpretation of data, every science report issued for administrative or policy-adaptive changes then has no collective bite. Without intelligent address, H.L. Mencken’s projections on human cultural behavior become realized, once again. Can we afford this?

In the meanwhile, very bad things are clearly occurring. Scientists, time to get your act together!